- Course

Managing Big Data with AWS Storage Options

Discover the solutions Amazon Web Services offers for collecting, processing, and storing big data from different sources, so you can derive insights about your business and your customers.

This course is included in the libraries shown below:

- Cloud

- Data

What you'll learn

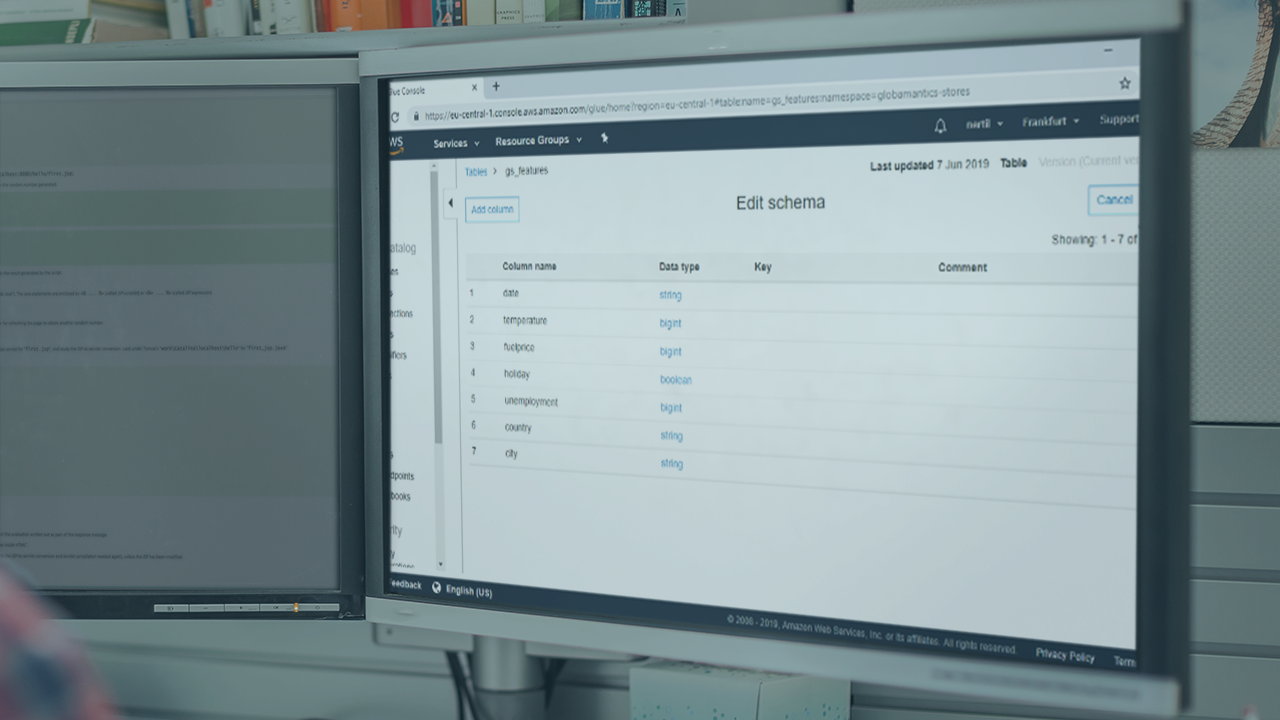

Data has become a critical factor in how companies make business decisions and understand their customers, but making sense of that data is neither a small nor a cheap task. In this course, Managing Big Data with AWS Storage Options, you will learn how to process the large amounts of data your company generates using Amazon Web Services. First, you will learn how to collect, process, and store petabytes of data in Amazon Redshift using AWS Glue. Next, you will discover how to process and store real-time data using Amazon Kinesis Data Firehose. Finally, you will explore how to use Amazon Elastic MapReduce (Amazon EMR) to provision clusters and analyze your big data, turning it into meaningful insights. By the end of this course, you will be able to use Amazon Web Services to set up an infrastructure that collects and analyzes petabytes of data. To get the most out of this course, you will need an AWS account so you can follow the demonstrated exercises.