- Course

The Building Blocks of Hadoop - HDFS, MapReduce, and YARN

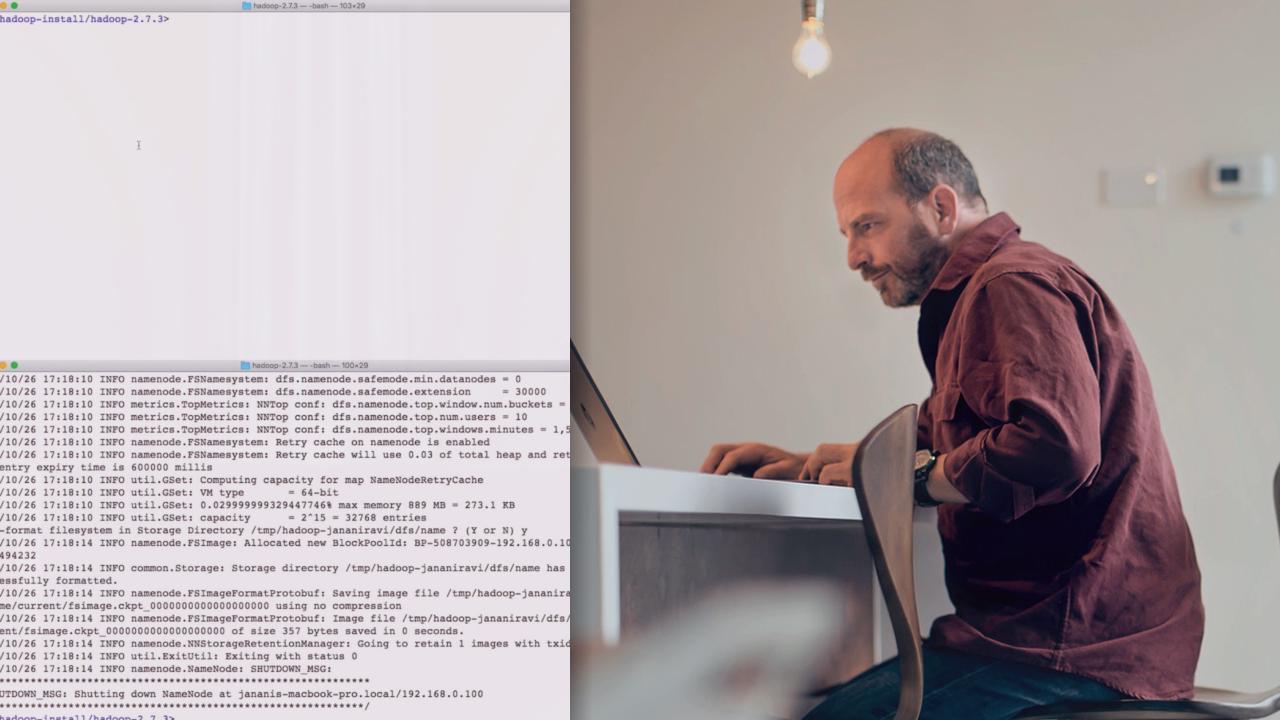

Processing billions of records requires a deep understanding of distributed computing. In this course, you'll be introduced to Hadoop, an open-source distributed computing framework that can help you do just that.

This course is included in the libraries shown below:

- Data

What you'll learn

You know how to write Java code, and you know what processing you want to perform on your huge dataset. But can you use the Hadoop distributed framework effectively to get your work done? This course, The Building Blocks of Hadoop - HDFS, MapReduce, and YARN, gives you a fundamental understanding of the building blocks of Hadoop: HDFS for storage, MapReduce for processing, and YARN for cluster management, to help you bridge the gap between programming and big data analysis. First, you'll get a complete overview of the Hadoop architecture. Next, you'll learn how to set up a pseudo-distributed Hadoop environment and how to submit and monitor tasks in that environment. Finally, you'll understand the configuration choices you can make for stability, reliability, and optimized task scheduling on your distributed system. By the end of this course, you'll have a strong understanding of the building blocks you need to use Hadoop effectively.