- Course

Building Features from Text Data

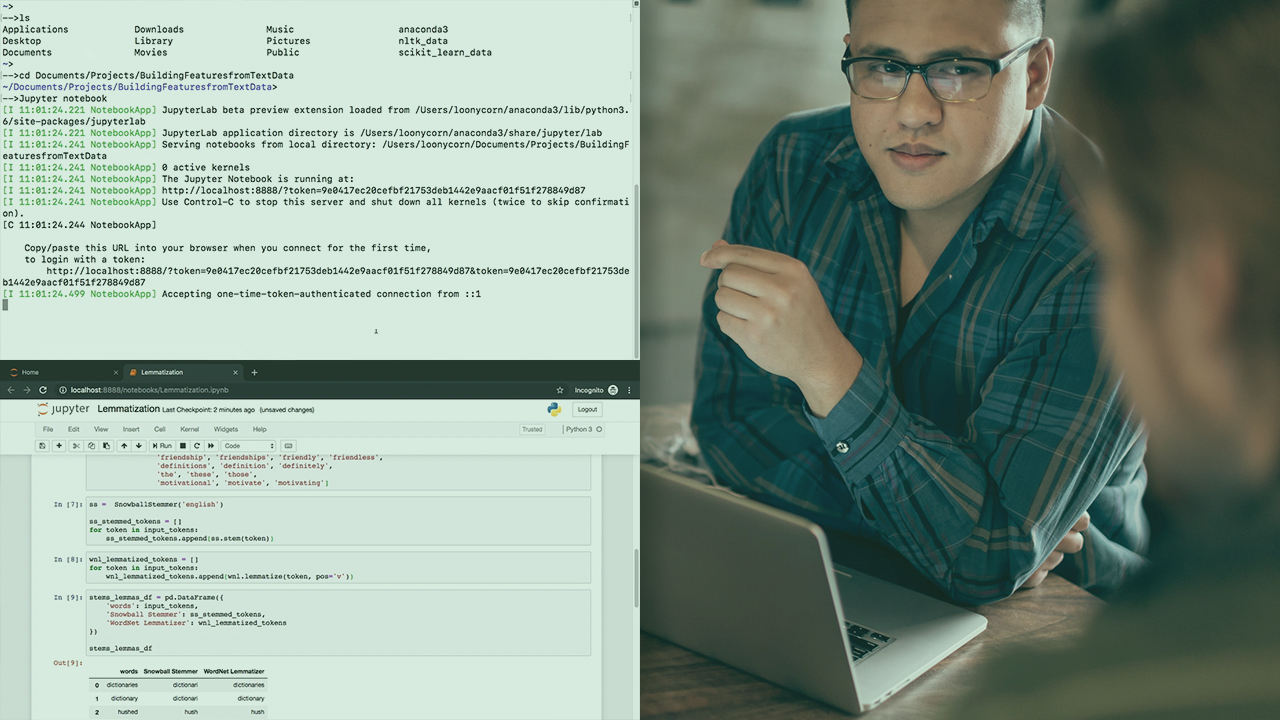

This course covers extracting information from text documents and building classification models, using natural language processing techniques such as feature vectorization, locality-sensitive hashing, stopword removal, and lemmatization.

This course is included in the libraries shown below:

- AI

- Data

What you'll learn

From chatbots to machine-generated literature, some of the hottest applications of ML and AI these days are for data in textual form.

In this course, Building Features from Text Data, you will gain the ability to structure textual data in a manner ideal for use in ML models.

First, you will learn how to represent documents as feature vectors using one-hot encoding, frequency-based, and prediction-based techniques. You will see how to improve these representations based on the meaning, or semantics, of the document.
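As a rough illustration of the frequency-based representations mentioned above, the following sketch builds binary presence, count, and tf-idf vectors using only the Python standard library. The toy documents, the whitespace tokenizer, and the unsmoothed `log(N / df)` weighting are assumptions made for this example; the course may use a different library and a different tf-idf variant. "One-hot" is read here as a binary presence vector per document.

```python
import math
from collections import Counter

# Toy corpus, invented for illustration only.
docs = [
    "the cat sat on the mat",
    "the dog sat on the log",
    "cats and dogs",
]

def tokenize(text):
    # Naive whitespace tokenizer; real pipelines handle punctuation too.
    return text.lower().split()

def build_vocabulary(documents):
    # One vector dimension per distinct term, in sorted order.
    return sorted({tok for doc in documents for tok in tokenize(doc)})

def one_hot_vector(doc, vocab):
    # 1 if the term occurs in the document at all, else 0.
    present = set(tokenize(doc))
    return [1 if term in present else 0 for term in vocab]

def count_vector(doc, vocab):
    # Number of times each vocabulary term occurs in the document.
    counts = Counter(tokenize(doc))
    return [counts[term] for term in vocab]

def tfidf_vectors(documents, vocab):
    # tf = term count / document length; idf = log(N / document frequency).
    n_docs = len(documents)
    tokenized = [tokenize(d) for d in documents]
    df = {term: sum(1 for toks in tokenized if term in toks) for term in vocab}
    vectors = []
    for toks in tokenized:
        counts = Counter(toks)
        vectors.append([
            (counts[term] / len(toks)) * math.log(n_docs / df[term])
            for term in vocab
        ])
    return vectors

vocab = build_vocabulary(docs)
binary = one_hot_vector(docs[0], vocab)
counts = count_vector(docs[0], vocab)
tfidf = tfidf_vectors(docs, vocab)

print(vocab)
print(binary)
print(counts)
print([round(w, 3) for w in tfidf[0]])
```

In the tf-idf vector for the first document, a term that appears in only one document ("cat") is weighted above a term shared with another document ("on"), which is the intended effect of the idf factor.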

Next, you will discover how to leverage various language modeling features such as stopword removal, frequency filtering, stemming and lemmatization, and parts-of-speech tagging.
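A minimal sketch of three of these preprocessing steps (stopword removal, stemming, and frequency filtering) might look like the following. The stopword list, the sample sentence, and the helper names are hypothetical, and `crude_stem` is a deliberately simple suffix stripper standing in for a real stemmer such as Porter's; lemmatization and parts-of-speech tagging need a language resource and are not shown.

```python
from collections import Counter

# Tiny illustrative stopword list; real lists are much longer.
STOPWORDS = {"the", "a", "an", "and", "on", "of", "is", "are", "to", "in"}
PUNCTUATION = ".,!?;:"

def tokenize(text):
    # Split on whitespace, strip surrounding punctuation, lowercase.
    stripped = (tok.strip(PUNCTUATION).lower() for tok in text.split())
    return [tok for tok in stripped if tok]

def remove_stopwords(tokens):
    # Drop very common words that carry little topical meaning.
    return [t for t in tokens if t not in STOPWORDS]

def crude_stem(token):
    # Strip one common suffix if at least three characters remain.
    # This is NOT the Porter algorithm, only a stand-in for the idea.
    for suffix in ("ing", "ed", "s"):
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token

def filter_by_frequency(tokens, min_count=1, max_count=None):
    # Keep tokens whose count in this token list lies within the bounds.
    counts = Counter(tokens)
    return [
        t for t in tokens
        if counts[t] >= min_count and (max_count is None or counts[t] <= max_count)
    ]

text = "The dogs are chasing the cats, and the cats jumped on the mat."
tokens = tokenize(text)
content = remove_stopwords(tokens)
stems = [crude_stem(t) for t in content]
frequent = filter_by_frequency(stems, min_count=2)

print(content)   # ['dogs', 'chasing', 'cats', 'cats', 'jumped', 'mat']
print(stems)     # ['dog', 'chas', 'cat', 'cat', 'jump', 'mat']
print(frequent)  # ['cat', 'cat']
```

The stem "chas" shows why stemming output is not always a dictionary word; lemmatization would instead map "chasing" to "chase".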

Finally, you will see how locality-sensitive hashing can be used to reduce the dimensionality of documents while still keeping similar documents close together.
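One common form of locality-sensitive hashing for cosine similarity is the random-hyperplane scheme, sketched below under the assumption that documents are already represented as numeric vectors. Whether the course uses this scheme or another (for example MinHash) is not stated; the function names, the 16-bit signature length, and the sample vectors are invented for illustration.

```python
import random

def make_hyperplanes(n_bits, dim, seed=0):
    # One random Gaussian normal vector per output bit.
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n_bits)]

def lsh_signature(vector, hyperplanes):
    # Each bit records which side of a hyperplane the vector falls on.
    return tuple(
        1 if sum(w * x for w, x in zip(plane, vector)) >= 0 else 0
        for plane in hyperplanes
    )

def hamming_distance(sig_a, sig_b):
    # Number of differing bits; smaller suggests a smaller angle between vectors.
    return sum(a != b for a, b in zip(sig_a, sig_b))

# Hypothetical count vectors over a six-term vocabulary.
doc_a = [2, 1, 0, 0, 1, 0]
doc_b = [4, 2, 0, 0, 2, 0]   # same direction as doc_a, twice the length
doc_c = [0, 0, 3, 1, 0, 2]   # shares no terms with doc_a

planes = make_hyperplanes(n_bits=16, dim=6)
sig_a = lsh_signature(doc_a, planes)
sig_b = lsh_signature(doc_b, planes)
sig_c = lsh_signature(doc_c, planes)

print(hamming_distance(sig_a, sig_b))  # 0: identical direction, identical bits
print(hamming_distance(sig_a, sig_c))
```

Each six-dimensional vector is reduced to a 16-bit signature here only to keep the example small; in practice the signature is far shorter than the vocabulary-sized vector it replaces, and vectors separated by a small angle tend to agree on most bits.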

You will round out the course by implementing a classification model on text documents using many of these modeling abstractions.

When you’re finished with this course, you will have the skills and knowledge to use documents and textual data in conceptually and practically sound ways and represent such data for use in machine learning models.

Building Features from Text Data

- Version Check | 16s

- Module Overview | 1m 16s

- Prerequisites and Course Outline | 1m 17s

- One-hot Encoding | 4m 24s

- Count Vectors | 3m

- Tf-Idf Vectors | 3m

- Co-occurrence Vectors | 5m 5s

- Word Embeddings | 5m 11s

- Installing Packages and Setting Up the Environment | 3m 8s

- Sentence and Word Tokenization | 5m 28s

- Plotting Word Frequency Distributions | 4m 1s

- Module Summary | 1m 12s