- Course

Building Neural Networks with scikit-learn

This course covers the support scikit-learn currently provides for building and training neural networks, including the perceptron, MLPClassifier, and MLPRegressor, as well as Restricted Boltzmann Machines.
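In scikit-learn, Restricted Boltzmann Machine support is provided by `BernoulliRBM`, an unsupervised transformer that is typically chained in front of a classifier. The following is a minimal sketch under some assumptions: it uses the bundled digits dataset, and its hyperparameters (`n_components=32`, `learning_rate=0.06`, `n_iter=10`) are chosen only for illustration and are not taken from the course. It compresses 64 pixel features into 32 hidden-unit activations and then trains a logistic regression on those activations.

```python
from sklearn.datasets import load_digits
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.neural_network import BernoulliRBM
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import MinMaxScaler

# 8x8 digit images flattened to 64 features (bundled with scikit-learn)
X, y = load_digits(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

model = Pipeline([
    ("scale", MinMaxScaler()),  # BernoulliRBM expects inputs in [0, 1]
    # Illustrative hyperparameters, not tuned
    ("rbm", BernoulliRBM(n_components=32, learning_rate=0.06,
                         n_iter=10, random_state=0)),
    ("clf", LogisticRegression(max_iter=1000)),
])
model.fit(X_train, y_train)

# Everything except the final classifier: scaling + RBM feature extraction
hidden = model[:-1].transform(X_test)  # 64 features -> 32 hidden-unit probabilities
print(hidden.shape)
print("test accuracy:", model.score(X_test, y_test))
```

Because the RBM sits inside a `Pipeline`, it is fitted only on the training split. Its output width (`n_components`) is the reduced dimensionality that the downstream model receives.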

This course is included in the libraries shown below:

- AI

- Data

What you'll learn

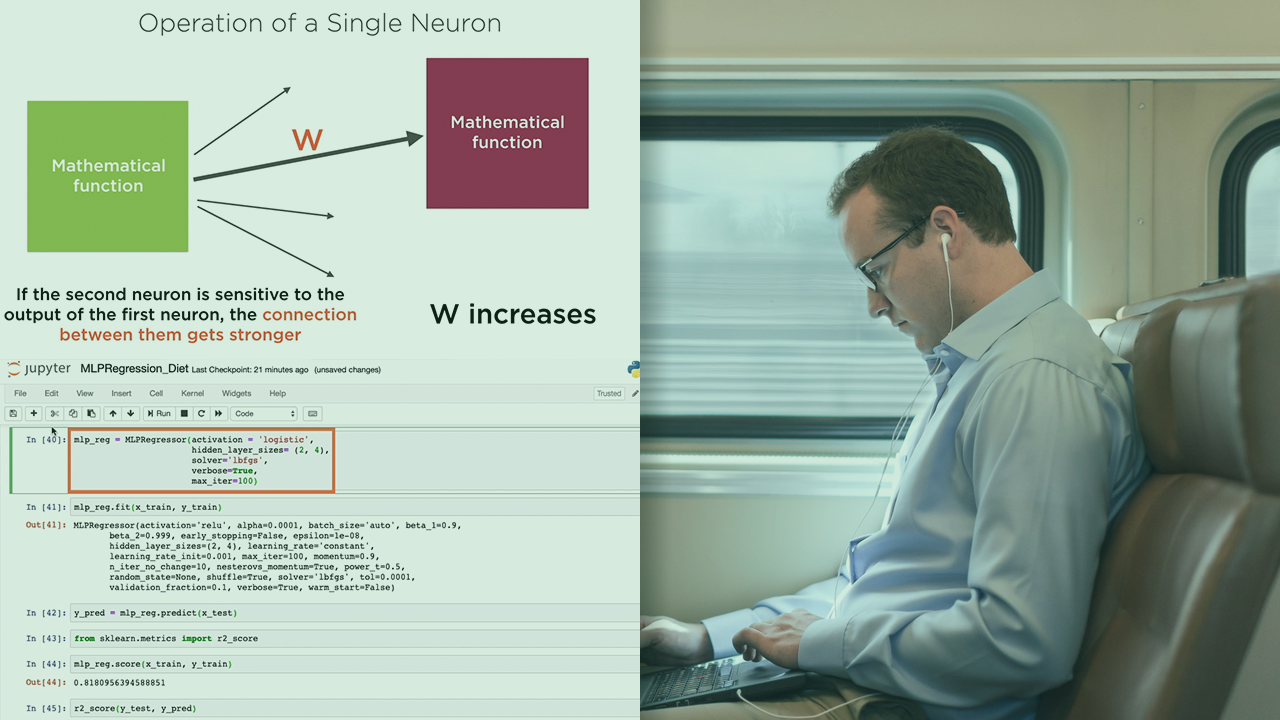

Even as the number of machine learning frameworks and libraries grows almost daily, scikit-learn remains as popular as ever. The one area where it lags clearly behind competing frameworks is building neural networks for deep learning. In this course, Building Neural Networks with scikit-learn, you will learn to make the most of the deep learning support that scikit-learn does provide. First, you will learn exactly where the gaps in scikit-learn’s neural network support lie, and how to use the constructs it does offer, such as the perceptron and the multi-layer perceptron. Next, you will see that a perceptron is simply a neuron with a step activation function, and that a multi-layer perceptron is effectively a feed-forward neural network. Then, you will use scikit-learn’s neural network estimators to build regression and classification models on numeric, text, and image data. Finally, you will use Restricted Boltzmann Machines to reduce the dimensionality of data before feeding it into a machine learning model. When you’re finished with this course, you will have the skills and knowledge to use every bit of support scikit-learn currently offers for building neural networks.
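You can check two of the claims above directly against scikit-learn's fitted attributes: a perceptron is a neuron with a step activation, and a multi-layer perceptron is a feed-forward network. The following is a minimal sketch under some assumptions: it uses a synthetic dataset from `make_classification`, and its MLP settings (one hidden layer of 8 ReLU units) are illustrative and not taken from the course. It recomputes `Perceptron` predictions as step(w·x + b). It also recomputes `MLPClassifier` probabilities with a manual forward pass over `coefs_` and `intercepts_`.

```python
import numpy as np
from scipy.special import expit
from sklearn.datasets import make_classification
from sklearn.linear_model import Perceptron
from sklearn.neural_network import MLPClassifier

# Small synthetic binary problem (illustrative data only)
X, y = make_classification(n_samples=200, n_features=4, random_state=0)

# --- Perceptron: one neuron with a step (threshold) activation ---
perc = Perceptron(random_state=0).fit(X, y)
z = X @ perc.coef_.ravel() + perc.intercept_[0]  # weighted sum plus bias
step_pred = (z > 0).astype(int)                   # Heaviside step activation
print("perceptron matches step(w.x + b):", np.array_equal(step_pred, perc.predict(X)))

# --- Multi-layer perceptron: a feed-forward network ---
mlp = MLPClassifier(hidden_layer_sizes=(8,), activation="relu",
                    max_iter=2000, random_state=0).fit(X, y)

def forward(X, coefs, intercepts):
    """Manual feed-forward pass through the fitted MLP weights."""
    a = X
    for W, b in zip(coefs[:-1], intercepts[:-1]):
        a = np.maximum(a @ W + b, 0.0)            # hidden layer: affine + ReLU
    logits = a @ coefs[-1] + intercepts[-1]       # output layer: affine
    return expit(logits).ravel()                  # logistic output (binary case)

manual_proba = forward(X, mlp.coefs_, mlp.intercepts_)
print("MLP matches manual forward pass:",
      np.allclose(manual_proba, mlp.predict_proba(X)[:, 1]))
```

Nothing in the manual forward pass is specific to scikit-learn. Each layer applies weights, adds a bias, and passes the result through an activation. This is why an `MLPClassifier` can be described as a plain feed-forward neural network.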

Building Neural Networks with scikit-learn

- Version Check | 16s
- Module Overview | 1m 9s
- Prerequisites and Course Outline | 1m 41s
- Support for Neural Networks in scikit-learn | 4m 48s
- Perceptrons and Neurons | 7m
- Multi-layer Perceptrons and Neural Networks | 2m 48s
- Training a Neural Network | 5m 21s
- Overfitting and Underfitting | 2m 59s
- Module Summary | 1m 26s