- Course

Create and Monitor Data Pipelines for a Batch Processing Solution

Data analytics at the serving layer come easy with a well designed and implemented data process. This course will teach you key considerations and design principles of creating and monitoring data pipelines for a batch processing solution.

- Course

Create and Monitor Data Pipelines for a Batch Processing Solution

Data analytics at the serving layer come easy with a well designed and implemented data process. This course will teach you key considerations and design principles of creating and monitoring data pipelines for a batch processing solution.

Get started today

Access this course and other top-rated tech content with one of our business plans.

Try this course for free

Access this course and other top-rated tech content with one of our individual plans.

This course is included in the libraries shown below:

- Cloud

- Data

What you'll learn

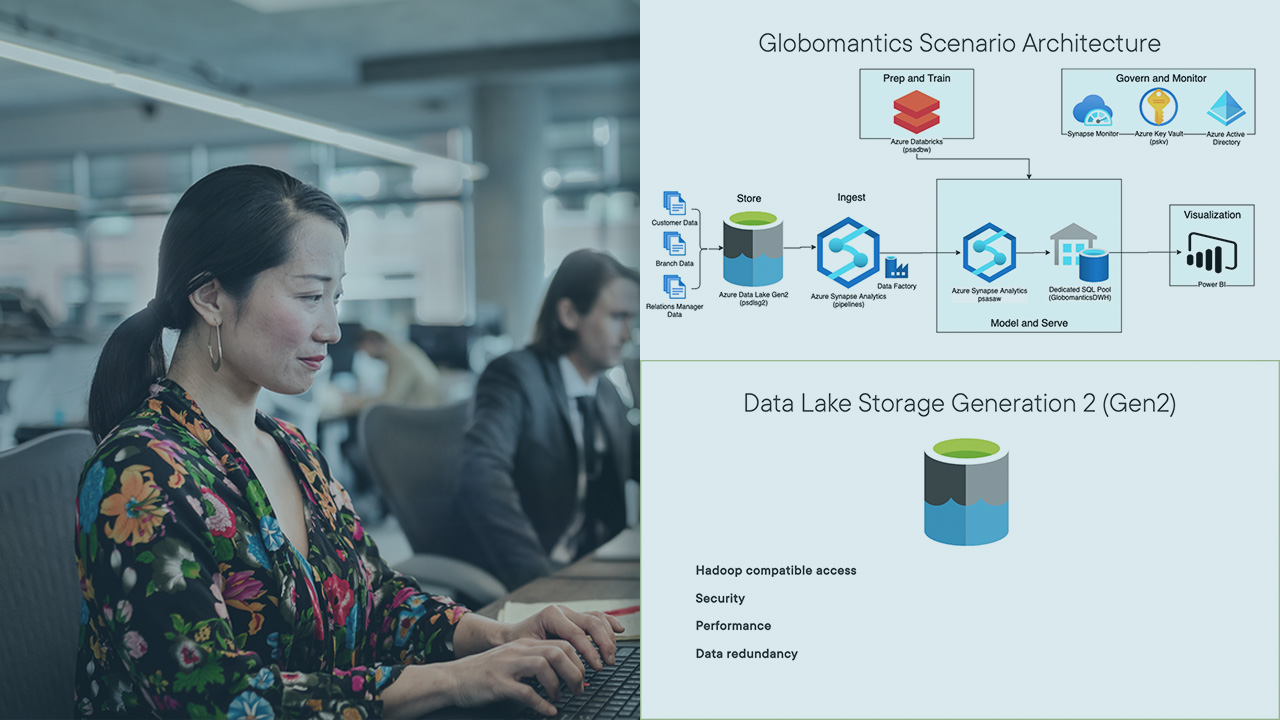

As a data specialist, you may be required to design and implement an end-to-end data pipeline for a batch processing solution. In this course, Create and Monitor Data Pipelines for a Batch Processing Solution, you’ll learn to design and implement data pipelines for a batch processing solution. First, you’ll explore the available data storages on Azure. Next, you’ll discover and develop batch processing solutions using Azure Data Factory and the available data storages. Finally, you’ll learn how to automate the data processing process and how to monitor for optimization and efficiency. When you’re finished with this course, you’ll have the skills and knowledge of a data professional needed to build and monitor end-to-end data pipelines.