- Course

Developing with .NET on Microsoft Azure - Getting Started

This course gets you started with everything you need to develop and deploy .NET web applications and services in Microsoft Azure.

This course is included in the libraries shown below:

- Cloud

What you'll learn

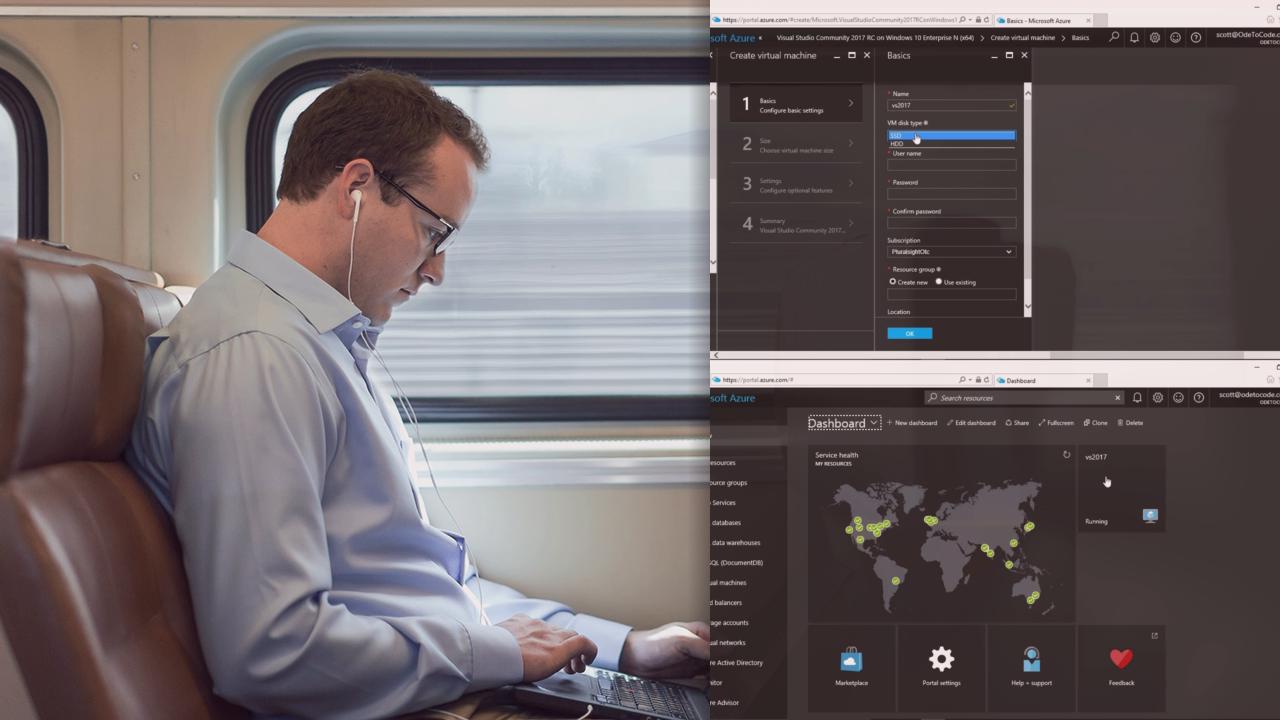

Hello, and welcome to Developing with .NET on Microsoft Azure - Getting Started, part of the .NET Developer on Microsoft Azure Learning Path here at Pluralsight. My name is Scott Allen, and I’m looking forward to helping you understand the breadth of Azure's resource offerings and supported technologies, and to helping you get your ASP.NET application set up quickly and deployed to the cloud.

Along the way, you’re going to learn how to scale, monitor, and troubleshoot that application, as well as how to work with databases using the Azure SQL Database and DocumentDB platforms. Make sure you’re already up to speed on ASP.NET application development before starting this course.

So if you’re ready to get started, Developing with .NET on Microsoft Azure - Getting Started is waiting for you. Thanks again for visiting me here at Pluralsight!