- Course

Getting Started with Apache Spark on Databricks

This course will introduce you to analytical queries and big data processing using Apache Spark on Azure Databricks. You will learn how to work with Spark transformations, actions, visualizations, and functions using the Databricks Runtime.

This course is included in the libraries shown below:

- Cloud

- Data

What you'll learn

Azure Databricks allows you to work with big data processing and queries using the Apache Spark unified analytics engine. With Azure Databricks you can set up your Apache Spark environment in minutes, autoscale your processing, and collaborate and share projects in an interactive workspace.

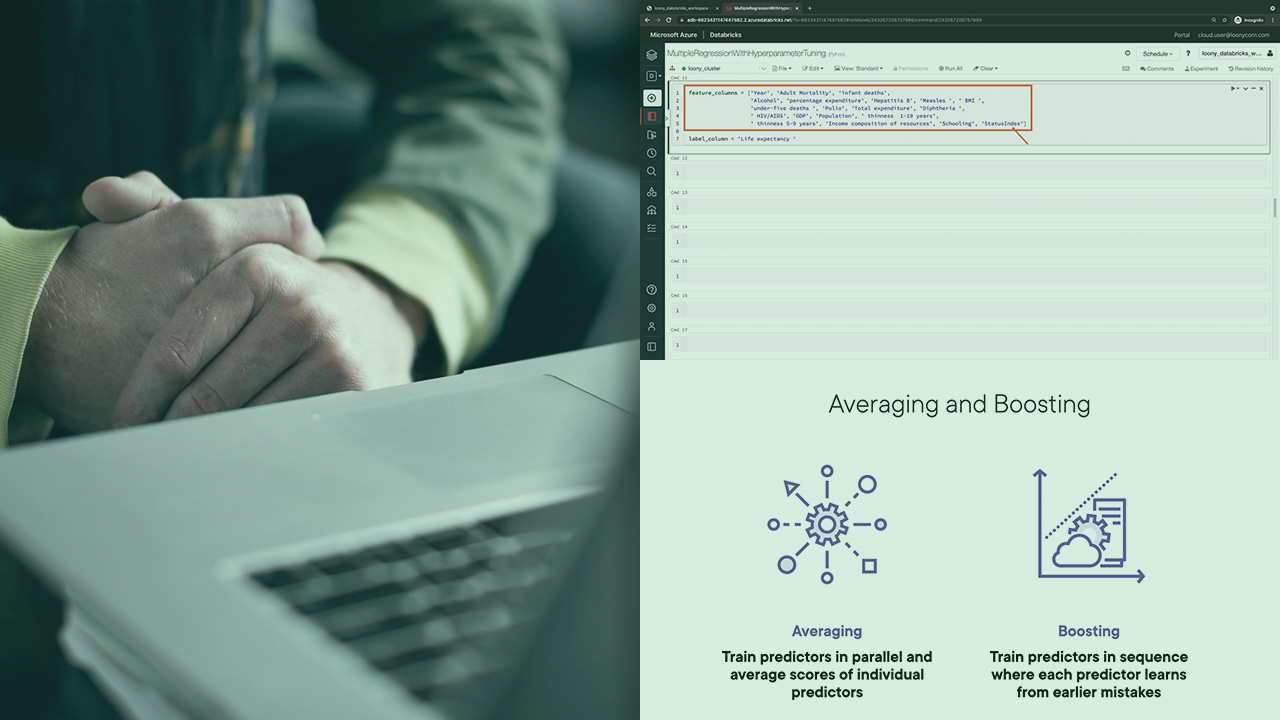

In this course, Getting Started with Apache Spark on Databricks, you will learn the components of the Apache Spark analytics engine, which allows you to process batch as well as streaming data using a unified API. First, you will learn how the Spark architecture is configured for big data processing. You will then learn how the Databricks Runtime makes it easy to work with Apache Spark on the Azure cloud platform, and you will explore the basic concepts and terminology of the technologies used in Azure Databricks.

Next, you will learn the workings and nuances of Resilient Distributed Datasets (RDDs), the core data structure used for big data processing in Apache Spark. You will see that RDDs are the data structures on top of which Spark DataFrames are built. You will study the two types of operations that can be performed on DataFrames, namely transformations and actions, and understand the difference between them. You’ll also learn how Databricks allows you to explore and visualize your data using the display() function, which leverages native Python libraries for visualizations.
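The difference between transformations and actions comes down to laziness: a transformation only records a step in a plan, while an action runs the plan and returns results. The sketch below is a toy model in plain Python so that it runs without a cluster. `LazyDataset` and `double` are hypothetical names used for illustration and are not part of the Spark API.

```python
# Toy model of lazy evaluation; LazyDataset is a hypothetical class, not a Spark API.
class LazyDataset:
    def __init__(self, source, steps=()):
        self._source = source
        self._steps = tuple(steps)

    # Transformations: record the step and return a new dataset; nothing runs yet.
    def map(self, fn):
        return LazyDataset(self._source, self._steps + (("map", fn),))

    def filter(self, pred):
        return LazyDataset(self._source, self._steps + (("filter", pred),))

    # Action: run the recorded steps and return concrete results.
    def collect(self):
        rows = iter(self._source)
        for kind, fn in self._steps:
            rows = map(fn, rows) if kind == "map" else filter(fn, rows)
        return list(rows)


calls = []


def double(x):
    calls.append(x)  # track when the function actually executes
    return x * 2


pipeline = LazyDataset([1, 2, 3, 4]).map(double).filter(lambda x: x > 4)
calls_before_action = len(calls)  # no call to double has happened yet
result = pipeline.collect()       # the action triggers execution
```

In Spark itself, methods such as `map` and `filter` play the transformation role and methods such as `collect` and `count` play the action role.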

Finally, you will get hands-on experience with big data processing operations such as projection, filtering, and aggregation. Along the way, you will learn how to read data from an external source such as Azure Cloud Storage and how to use built-in functions in Apache Spark to transform your data.
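As a rough illustration of what projection, filtering, and aggregation mean, the sketch below applies each one to a small list of records in plain Python, so it runs without a Spark cluster. The sample rows and column names are made up for this example and do not come from the course; the comments name the Spark DataFrame methods that play the same role.

```python
from collections import defaultdict

# Hypothetical sample rows; the course's actual dataset is not specified here.
rows = [
    {"city": "Oslo", "product": "A", "amount": 10},
    {"city": "Oslo", "product": "B", "amount": 5},
    {"city": "Pune", "product": "A", "amount": 7},
    {"city": "Pune", "product": "A", "amount": 1},
]

# Projection: keep only selected columns (DataFrame.select in Spark).
projected = [{"city": r["city"], "amount": r["amount"]} for r in rows]

# Filtering: keep only rows matching a condition (DataFrame.filter / where).
filtered = [r for r in projected if r["amount"] >= 5]

# Aggregation: group rows and reduce each group (DataFrame.groupBy(...).sum(...)).
totals = defaultdict(int)
for r in filtered:
    totals[r["city"]] += r["amount"]
totals = dict(totals)
```

On a real cluster the same three steps would be chained on a DataFrame and executed in parallel across partitions rather than in a single Python process.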

When you are finished with this course, you will have the skills to work with basic transformations, visualizations, and aggregations using Apache Spark on Azure Databricks.

Getting Started with Apache Spark on Databricks

- Version Check | 15s

- Prerequisites and Course Outline | 2m 26s

- Introducing Apache Spark | 5m 21s

- Spark Architecture | 4m 46s

- Introducing Databricks | 2m 59s

- Databricks Science and Engineering Concepts | 6m 35s

- Azure Databricks Architectural Overview | 4m 15s

- Demo: Creating an Azure Databricks Workspace | 2m 35s

- Demo: Provisioning an All-Purpose Cluster | 5m 16s