- Course

Architecting Data Warehousing Solutions Using Google BigQuery

BigQuery is the Google Cloud Platform’s data warehouse on the cloud. In this course, you’ll learn how you can work with BigQuery on huge datasets with little to no administrative overhead.

This course is included in the libraries shown below:

- Cloud

- Data

What you'll learn

Organizations store massive amounts of data collated from a wide variety of sources. BigQuery supports fast querying at petabyte scale, with serverless functionality and autoscaling. BigQuery also supports streaming data, works with visualization tools, and interacts seamlessly with Python scripts running from Datalab notebooks.

In this course, Architecting Data Warehousing Solutions Using Google BigQuery, you’ll learn how you can work with BigQuery on huge datasets with little to no administrative overhead related to cluster and node provisioning.

First, you'll start off with an overview of the suite of storage products on Google Cloud and the unique position that BigQuery holds. You’ll see how BigQuery compares with Cloud SQL, Bigtable, and Datastore on GCP and how it differs from Amazon Redshift, the data warehouse on AWS.

Next, you’ll create datasets in BigQuery, which are the equivalent of databases in RDBMSes, and create tables within those datasets, where the actual data is stored. You’ll work with BigQuery using the web console as well as the command line. You’ll load data into BigQuery tables using the CSV, JSON, and Avro formats and see how you can execute and manage jobs.

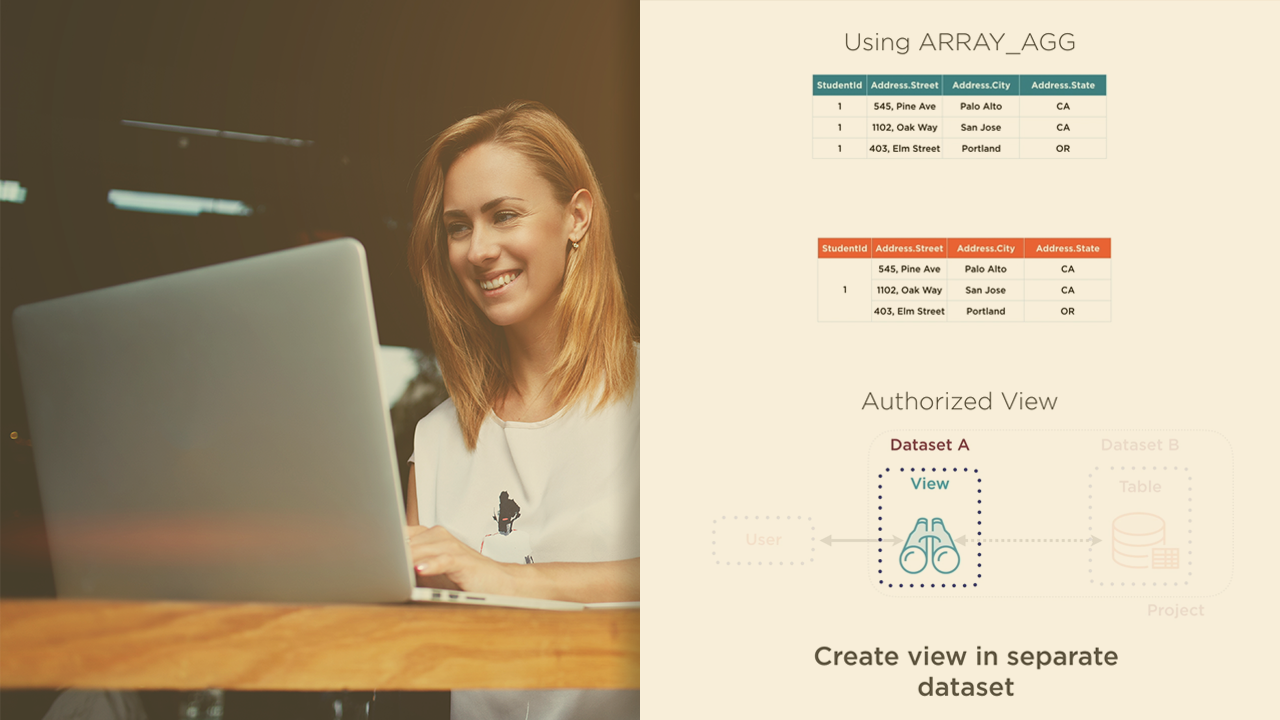

Finally, you'll wrap up by exploring advanced analytical queries which use nested and repeated fields. You’ll run aggregate operations on your data and use advanced windowing functions as well. You’ll programmatically access BigQuery using client libraries in Python and visualize your data using Data Studio.
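As a rough illustration of programmatic access, the sketch below builds a windowing query and runs it through the Python client library. It is not taken from the course: the public table `bigquery-public-data.usa_names.usa_1910_2013`, its column names, and the helper names `build_window_query` and `run_query` are assumptions chosen for this example, and running the query assumes the `google-cloud-bigquery` package is installed and GCP credentials are configured.

```python
# Hypothetical example table; assumed to have name, year, number, state columns.
EXAMPLE_TABLE = "bigquery-public-data.usa_names.usa_1910_2013"


def build_window_query(table: str, limit: int = 10) -> str:
    """Return SQL that ranks names within each year using a window function."""
    return (
        "SELECT name, year, number,\n"
        "  RANK() OVER (PARTITION BY year ORDER BY number DESC) AS rank_in_year\n"
        f"FROM `{table}`\n"
        "WHERE state = 'TX'\n"
        "ORDER BY year, rank_in_year\n"
        f"LIMIT {limit}"
    )


def run_query(sql: str) -> list:
    """Run the SQL in BigQuery and return the result rows as a list."""
    # Imported here so the query builder works without the client library.
    from google.cloud import bigquery

    client = bigquery.Client()  # uses the default project and credentials
    return list(client.query(sql).result())  # result() waits for the job
```

Calling `run_query(build_window_query(EXAMPLE_TABLE))` would submit the query as a BigQuery job and return its rows; `build_window_query` on its own only produces the SQL text and needs no GCP access.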

At the end of this course, you'll be comfortable working with huge datasets stored in BigQuery, executing analytical queries, performing analysis, and building charts and graphs for your reports.

Architecting Data Warehousing Solutions Using Google BigQuery

- Module Overview | 2m 4s

- Prerequisites and Course Outline | 3m 2s

- Transactional and Analytical Processing | 5m 4s

- Introducing BigQuery | 5m 18s

- Choosing BigQuery | 5m 52s

- BigQuery Pricing | 5m 2s

- Logging into the GCP and Enabling APIs | 2m 14s

- Beta UI and Classic UI | 2m 15s

- Public Datasets | 3m 32s

- Executing Queries | 4m 1s

- Working with the bq Command on the Terminal | 4m 22s