- Course

Handling Streaming Data with AWS Kinesis Data Analytics Using Java

Kinesis Data Analytics is a serverless service for transforming and analyzing streaming data with Apache Flink and SQL. You'll learn to use the Amazon Kinesis Data Analytics service to process streaming data using the Apache Flink runtime.

This course is included in the libraries shown below:

- Cloud

- Data

What you'll learn

Kinesis Data Analytics is part of the Kinesis streaming platform, along with Kinesis Data Streams, Kinesis Data Firehose, and Kinesis Video Streams.

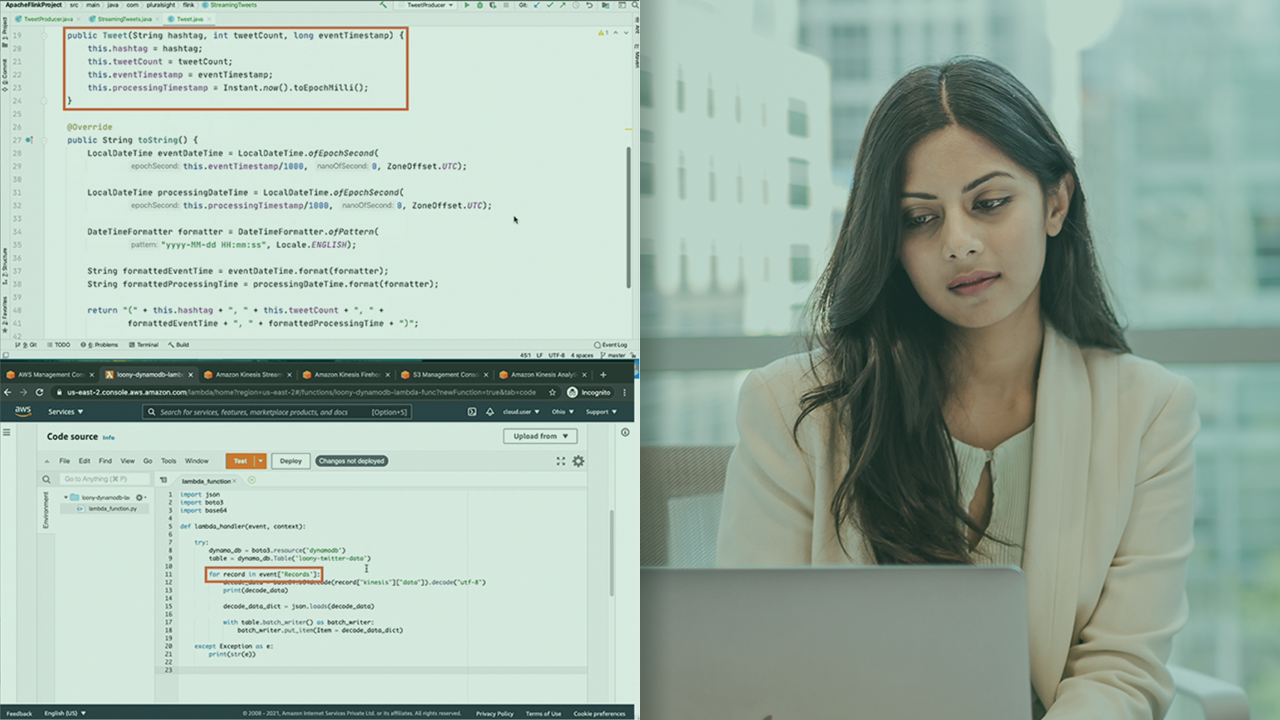

In this course, Handling Streaming Data with AWS Kinesis Data Analytics Using Java, you'll work with live Twitter feeds to process real-time streaming data. First, you'll create a developer account on the Twitter platform and generate authentication keys and tokens to access the Twitter streaming API. You'll then write code to read these tweets as streaming messages and publish them to Kinesis Data Streams, which can serve as a source of streaming data for Kinesis Data Analytics.

Next, you'll run Kinesis Data Analytics applications using the Apache Flink runtime to process tweets. You'll deploy these applications using the web console as well as the command line. You'll set up the right permissions and configure these applications to use cloud monitoring and logging. You'll also see how to use log messages to debug errors in your applications.

Finally, you'll perform a number of different processing operations on streaming tweets, including windowing operations using tumbling and sliding windows. You'll apply global windows with count triggers and continuous-time triggers. You'll implement join operations and create branching pipelines that sink some results to DynamoDB and other results to S3.
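To make the difference between tumbling and sliding windows concrete, here is a minimal plain-Java sketch of how an event timestamp maps to window start times. It is an illustration only and does not use the Apache Flink API; the class name `WindowSketch`, its method names, and the sample values are all hypothetical, and timestamps are assumed to be in milliseconds.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper for illustration; not part of Apache Flink or the course code.
final class WindowSketch {

    // A tumbling window has a fixed size and does not overlap its neighbors,
    // so each timestamp belongs to exactly one window.
    static long tumblingWindowStart(long timestampMs, long sizeMs) {
        return timestampMs - Math.floorMod(timestampMs, sizeMs);
    }

    // A sliding window has a size and a slide interval. When the slide is
    // smaller than the size, windows overlap and one timestamp can belong
    // to several windows. Returns the start of every window containing it,
    // latest window first.
    static List<Long> slidingWindowStarts(long timestampMs, long sizeMs, long slideMs) {
        List<Long> starts = new ArrayList<>();
        long lastStart = timestampMs - Math.floorMod(timestampMs, slideMs);
        for (long start = lastStart; start > timestampMs - sizeMs; start -= slideMs) {
            starts.add(start);
        }
        return starts;
    }

    public static void main(String[] args) {
        long eventTime = 7_500L; // assumed sample timestamp in milliseconds

        // 5-second tumbling windows: the event falls only in [5000, 10000).
        System.out.println(tumblingWindowStart(eventTime, 5_000L));

        // 10-second windows sliding every 5 seconds: the event falls in
        // both [5000, 15000) and [0, 10000).
        System.out.println(slidingWindowStarts(eventTime, 10_000L, 5_000L));
    }
}
```

In a real Flink job the runtime performs this assignment through its window assigners; the sketch only shows why a single tweet can contribute to one tumbling window but to several overlapping sliding windows.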

When you're finished with this course, you'll have the skills and knowledge to create and deploy streaming applications that process live streams such as Twitter messages.

Handling Streaming Data with AWS Kinesis Data Analytics Using Java

- Version Check | 15s

- Prerequisites and Course Outline | 2m 50s

- Kinesis Data Analytics and Connectors | 3m 44s

- Demo: Setting up the Local Environment | 5m 50s

- Demo: Setting up an Apache Maven Project | 3m 33s

- Demo: Setting up a Twitter Developer Account and Generating Keys | 4m 51s

- Demo: Publishing Twitter Messages to Kinesis Data Streams | 4m 41s

- Demo: Processing Tweets Using Apache Flink | 3m 46s

- Demo: Creating a Kinesis Data Analytics Application | 3m 36s

- Demo: Configuring Policies for the Kinesis Data Analytics Application | 2m 52s

- Demo: Running the Kinesis Data Analytics Application | 4m 27s

- Demo: Updating Starting and Stopping Application Using the CLI | 4m 32s

- Demo: Sinking Processed Results to a Kinesis Data Stream | 4m 41s

- Demo: Configuring Application Runtime Properties | 3m 59s

- Demo: Configuring a Kinesis Firehose Delivery Stream to Deliver Results to S3 | 5m 53s