- Course

Writing Complex Analytical Queries with Hive

Hive is a data warehouse that runs on top of the Hadoop distributed computing framework. Because it works on huge datasets, understanding its features helps you write fast, efficient queries.

This course is included in the libraries shown below:

- Data

What you'll learn

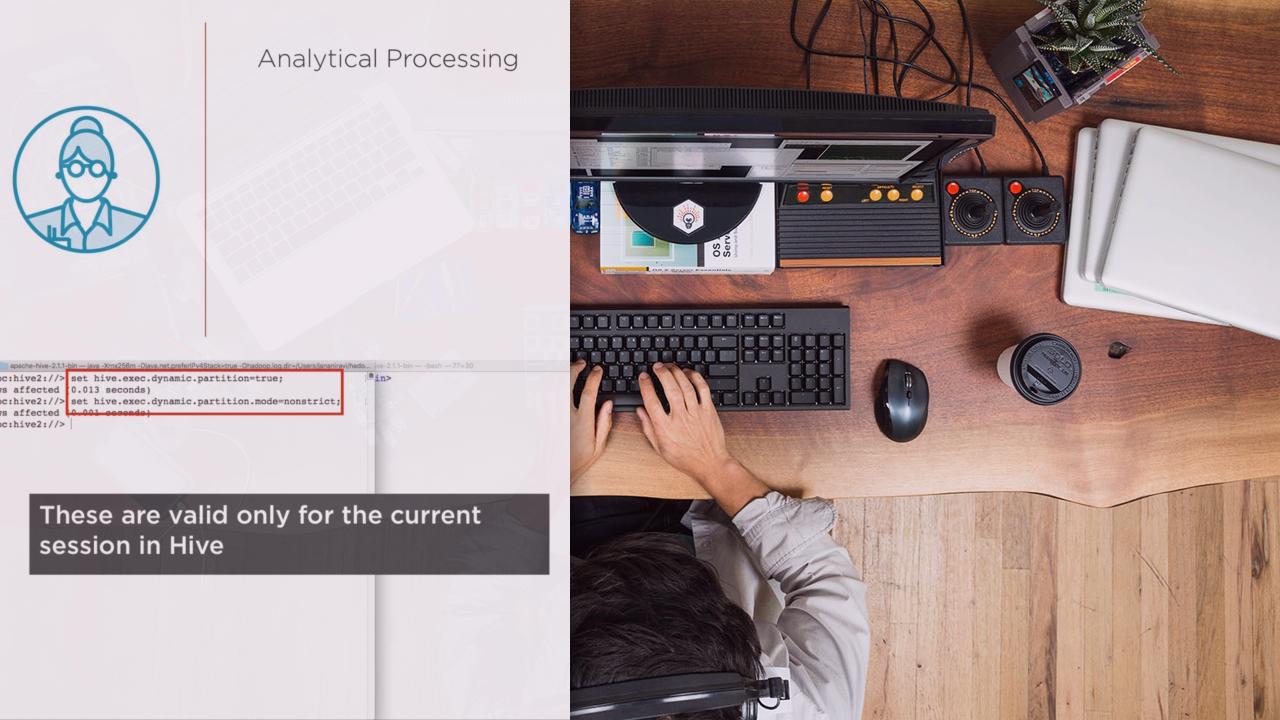

The Hive data warehouse supports analytical processing: it generally runs long-running jobs that crunch huge amounts of data. By understanding what goes on behind the scenes in Hive, you can structure your queries to perform well, making your data analysis more efficient. In this course, Writing Complex Analytical Queries with Hive, you'll discover how to make design decisions and how to lay out data in your Hive tables. First, you'll dive into partitioning and bucketing, which are ways to reduce the amount of data a query has to process, and you'll cover how and when to use partitioning, bucketing, or both when you set up your tables. Next, you'll be introduced to join operations, including how to deal with large tables and how to run and optimize map-only joins. Lastly, you'll learn windowing functions, which let you write complex queries simply, with no intermediate tables, an important optimization with large datasets. By the end of this course, you'll have an understanding of the little details that make writing complex queries easier and faster.

Writing Complex Analytical Queries with Hive

- Version Check | 21s
- Introduction and Prerequisites for This Course | 1m 39s
- A Data Warehouse for Analytical Processing | 4m 6s
- Hive as a Data Warehouse | 3m 14s
- Managing Huge Datasets and Writing Faster Queries | 2m 58s
- A Brief Introduction: Bucketing and Partitioning | 3m 36s
- A Brief Introduction: Join Optimizations | 2m 52s
- A Brief Introduction: Window Functions | 3m 8s