- Course

Integrating SQL and ETL Tools with Databricks

Databricks is much easier to adopt when it integrates seamlessly into an existing development environment. This course looks into how this can be accomplished for the SQL Workbench/J client and the Prophecy service.

This course is included in the libraries shown below:

- Cloud

- Data

What you'll learn

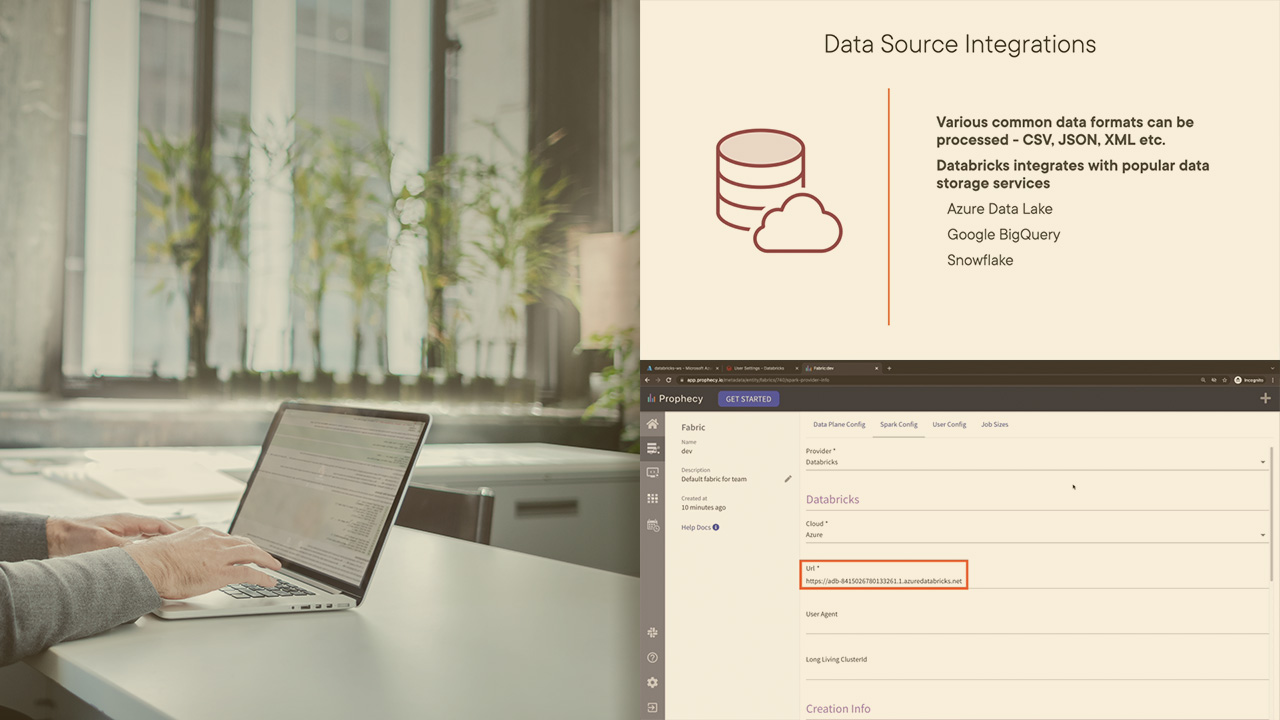

For any organization that uses Databricks, integrating this big data platform into its own tool environment can prove a complex task. In this course, Integrating SQL and ETL Tools with Databricks, you'll look into two specific tools, SQL Workbench/J and Prophecy, and learn how to link them with a Databricks workspace. First, you'll discover the need for tool integrations, how they can help engineers be more productive, and how they can avoid adding to the complexity of a tooling environment. Then, you'll explore linking an Azure Databricks workspace with a popular SQL client, namely SQL Workbench/J. Finally, you'll learn the steps involved in integrating a Prophecy workflow with Databricks. Once you complete this course, you will be well-versed in the types of integrations that are possible with Databricks, and in how to link two popular tools with this big data service.