- Course

Linux High Availability Cluster Management

Need to know how to improve the performance and reliability of your local or cloud Linux deployments? Discover the magic of high availability cluster computing.

This course is included in the libraries shown below:

- Core Tech

What you'll learn

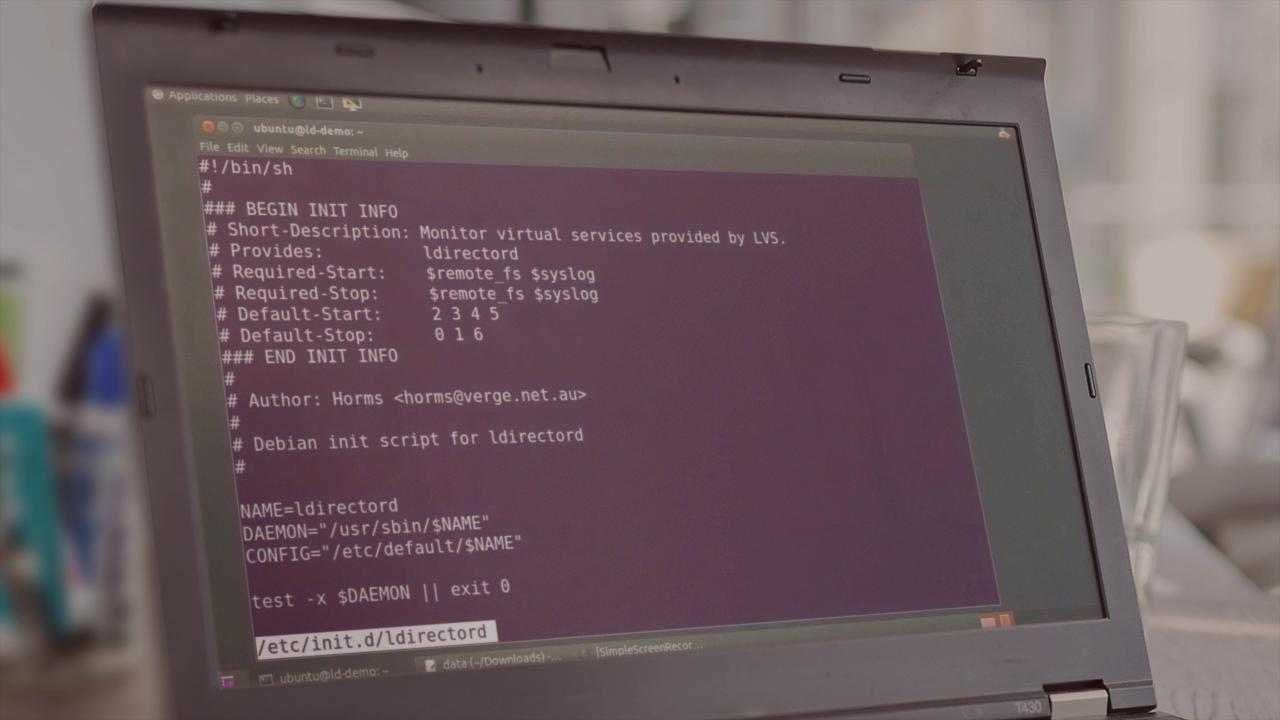

Running server operations on clusters of physical or virtual computers is about improving performance beyond what you could expect from a single, high-powered server. But "improving performance" can mean different things in different contexts. This course, Linux High Availability Cluster Management, covers several of those meanings. You'll be introduced to the principles of Linux-based high availability (HA) and cluster management, and to the key tools currently used in real-world environments, including Linux Virtual Server (LVS), HAProxy, Pacemaker, DRBD, OCFS2, and GFS2. You'll learn how to distribute workloads intelligently across different geographic regions and demand conditions (load balancing). You'll also discover how to provide backup servers that can be brought into service quickly when a working node fails (failover). Finally, you'll learn how to optimize the way your data tier is deployed and how to build in fault tolerance through loosely coupled architectures. By the end of this course, you will be able to manage and improve many aspects of the performance of your local or cloud Linux deployments, and make them more reliable.