- Course

Making .NET Applications Even Faster

Learn how to make .NET applications even faster by reducing GC pressure, using SIMD instructions, optimizing for CPU utilization, and using .NET optimization technologies.

This course is included in the libraries shown below:

- Core Tech

What you'll learn

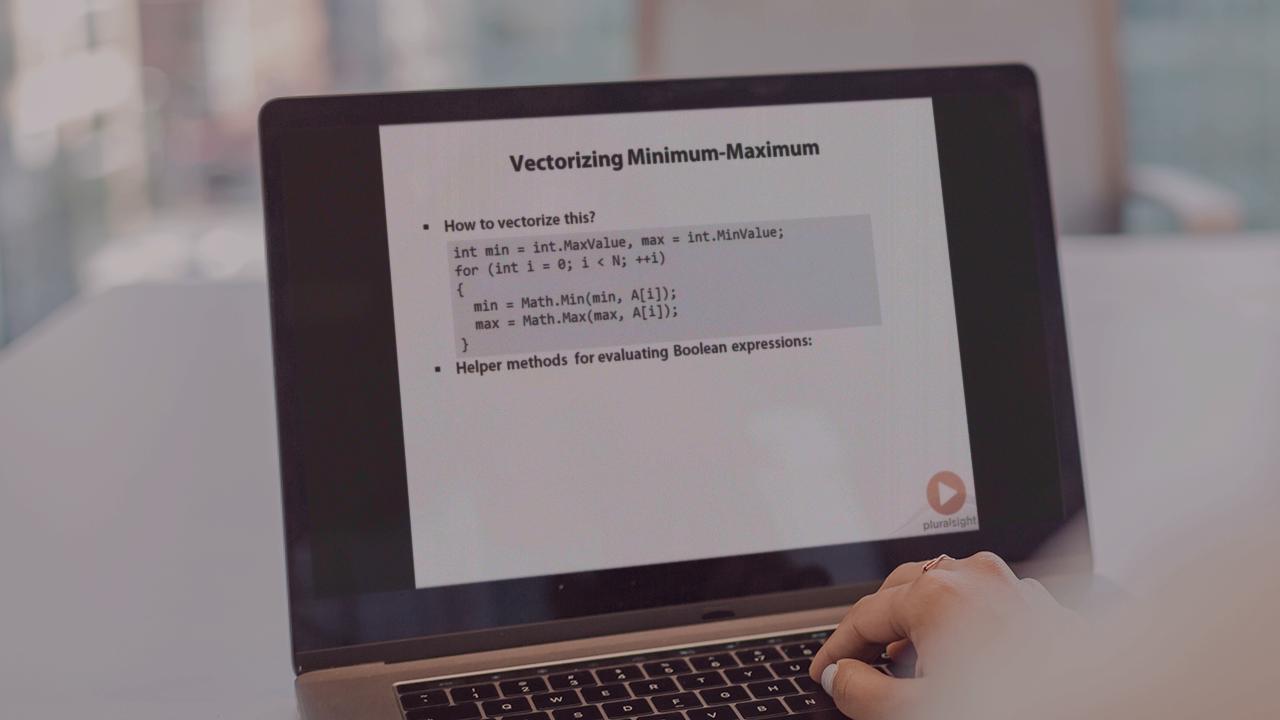

In this course, you will learn how to make .NET applications even faster by using a variety of techniques that expand upon the "Making .NET Applications Faster" course. You will explore the garbage collector's inner workings and how to use them to your advantage. You will learn about modern CPUs and how to optimize for them using vector instructions and cache optimization techniques. Finally, you will learn about relevant JIT optimizations and .NET Native, a preview .NET optimization technology.