- Course

Microsoft Azure Cognitive Services: Face API

Machine learning and artificial intelligence are enabling great change today. This course will introduce you to the Microsoft Azure Cognitive Services Face API, helping you understand how to incorporate its functionality into your own apps.

This course is included in the libraries shown below:

- AI

- Cloud

What you'll learn

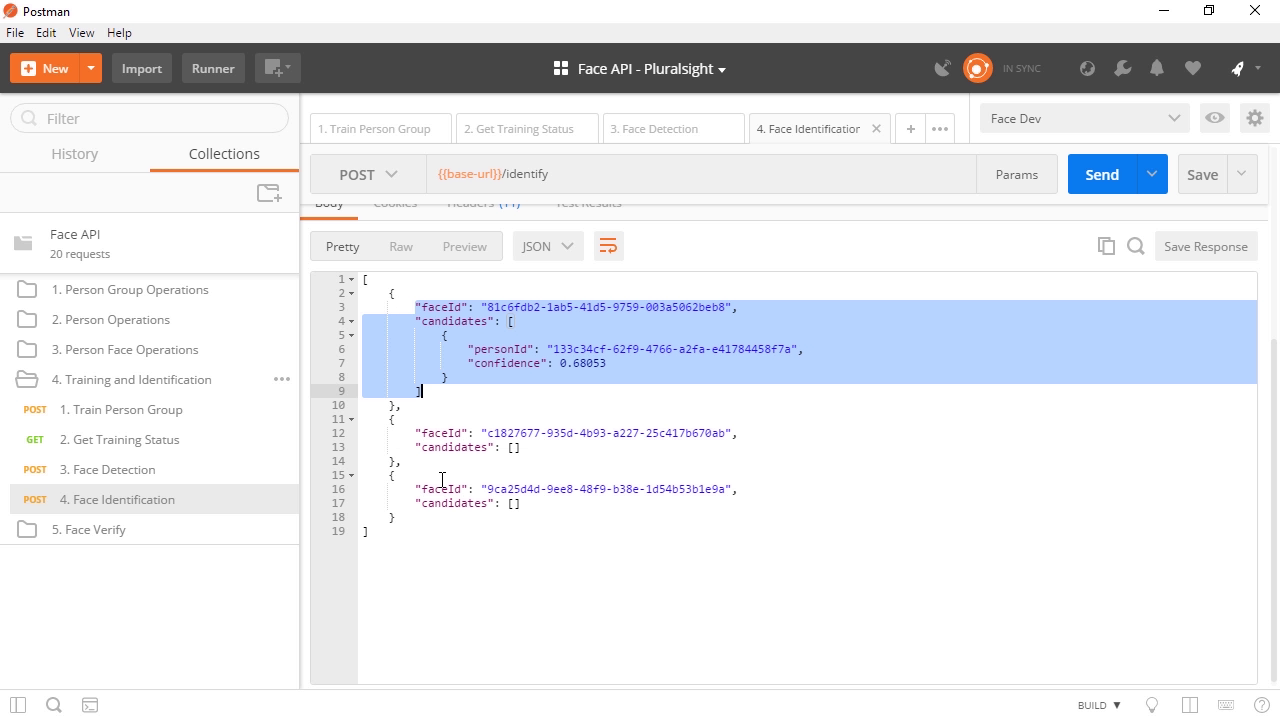

Artificial intelligence is quickly becoming one of the most important technologies in today's fast-moving world. In this course, Microsoft Azure Cognitive Services: Face API, you will learn how to extract metadata from faces, such as emotion, age, gender, and more. First, you will see how face detection works, using direct HTTP calls as well as the SDK. Next, you will get familiar with the face identification workflow and how to run face identification in a Postman environment. Finally, you will see how to use the Face API Explorer on your local machine. By the end of this course, you will feel confident using the Face API over HTTP, a skill that translates easily into your preferred programming language. Software required: Microsoft Azure Cognitive Services Face API 1.0.
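To give a sense of what a direct HTTP call for face detection might look like, here is a minimal Python sketch. It is not taken from the course: the helper names `build_detect_request` and `detect_faces`, the example endpoint, and the placeholder key are all assumptions for illustration. The sketch assumes the Face API 1.0 `detect` route with the `returnFaceId` and `returnFaceAttributes` query parameters and the `Ocp-Apim-Subscription-Key` header; which attributes are actually available may depend on your resource and service version.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request, urlopen


def build_detect_request(endpoint, key, image_url,
                         attributes=("age", "gender", "emotion")):
    """Build (but do not send) a POST request for the Face API detect route.

    `endpoint` and `key` are placeholders for your own Azure resource values.
    """
    query = urlencode({
        "returnFaceId": "true",
        "returnFaceAttributes": ",".join(attributes),
    })
    url = f"{endpoint.rstrip('/')}/face/v1.0/detect?{query}"
    # The image is passed by URL in a small JSON body.
    body = json.dumps({"url": image_url}).encode("utf-8")
    headers = {
        "Ocp-Apim-Subscription-Key": key,
        "Content-Type": "application/json",
    }
    return Request(url, data=body, headers=headers, method="POST")


def detect_faces(request):
    """Send the request and parse the JSON response (requires network access)."""
    with urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))
```

With a real endpoint and key, something like `detect_faces(build_detect_request(endpoint, key, image_url))` would send the call. Keeping request construction separate from sending makes the same pieces (URL, headers, JSON body) easy to reproduce in Postman or in another language.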