- Course

Deploying Data Pipelines in Microsoft Azure

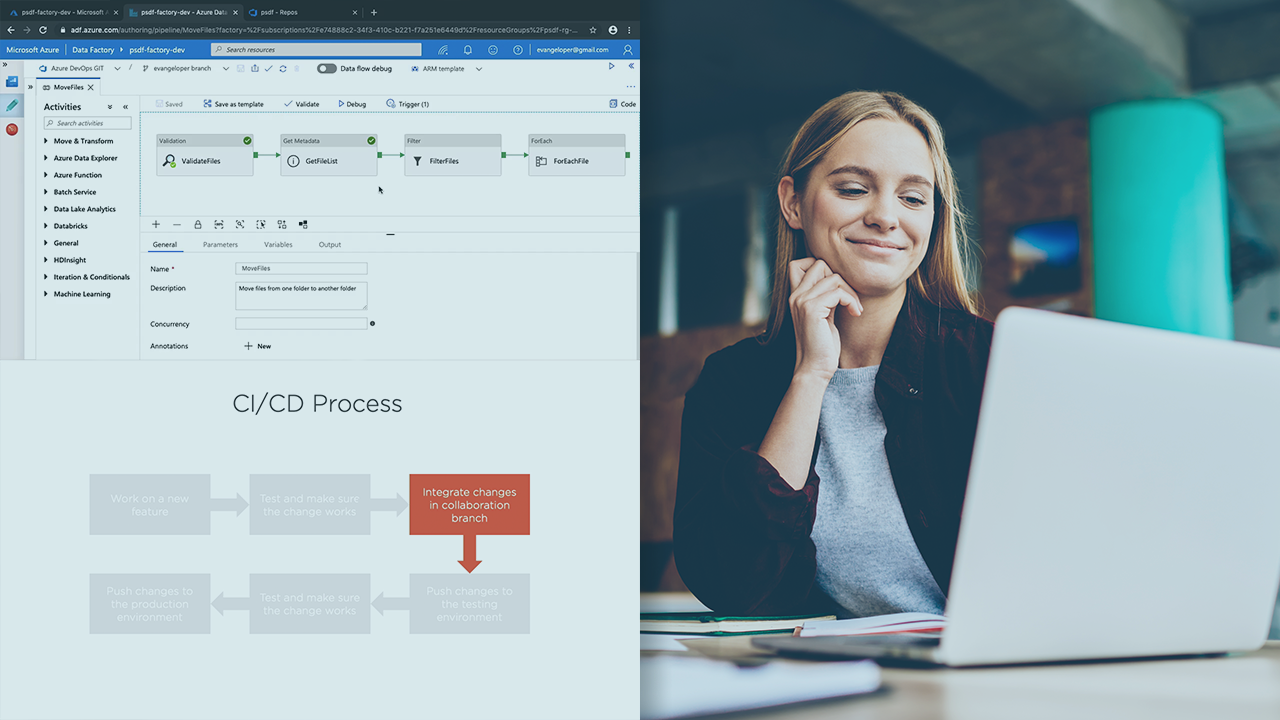

In this course, you will learn the foundational knowledge needed to apply CI/CD methodologies to your pipeline creation process in Azure Data Factory, so that you can deploy robust and well-tested data pipelines to production.

This course is included in the libraries shown below:

- Cloud

- Data

What you'll learn

Data engineers working with Azure Data Factory (ADF) can take advantage of Continuous Integration and Continuous Delivery (CI/CD) practices to deploy robust and well-tested data pipelines to production.

In this course, Deploying Data Pipelines in Microsoft Azure, you will gain the foundational knowledge needed to apply CI/CD methodologies to your data pipeline creation process.

First, you will learn to create the right environments, so that building data pipelines in ADF the right way becomes the path of least resistance.

Next, you will discover how to deploy data pipelines using ADF visual tools and ARM templates.

Finally, you will explore how to create a release pipeline in Azure DevOps to automate the deployment process between three distinct environments: development, staging, and production.

When you are finished with this course, you will have the skills and knowledge to apply CI/CD practices to your data pipeline creation process.