- Course

Monitor and Evaluate Model Performance During Training

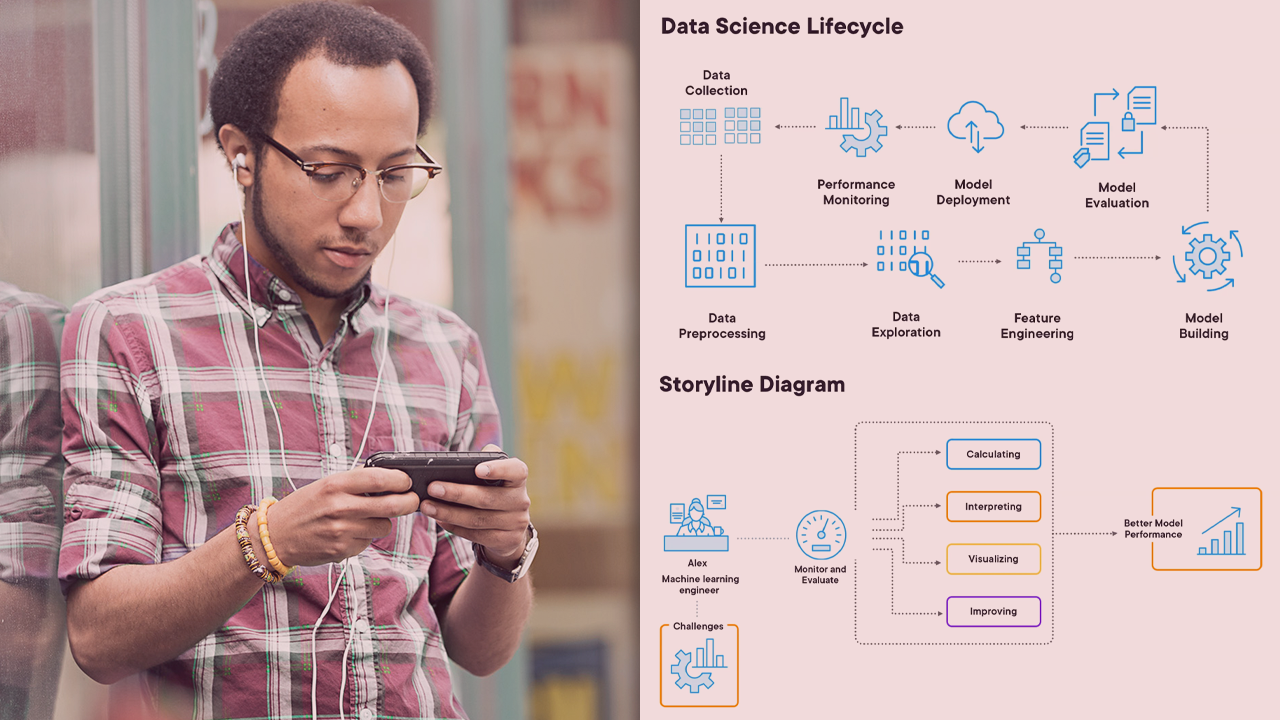

Enhance your machine-learning models! This course will teach you the tools and techniques to effectively monitor and evaluate model performance during training.

This course is included in the libraries shown below:

- AI

- Data

What you'll learn

Ensuring that machine-learning models perform well during training can be challenging, and problems that go unnoticed often result in inefficient training and inaccurate predictions. In this course, Monitor and Evaluate Model Performance During Training, you’ll gain the ability to assess and improve your machine-learning models effectively. First, you’ll explore the key metrics used to evaluate model performance, such as accuracy, precision, recall, F1 score, and the area under the receiver operating characteristic (ROC) curve. Next, you’ll discover how to visualize training progress and why loss curves, confusion matrices, and ROC and precision-recall curves matter for binary classification. Finally, you’ll learn how to use real-time monitoring tools such as TensorBoard, Weights & Biases, and MLflow to track and improve your model’s training process. When you’re finished with this course, you’ll have the model-evaluation skills and knowledge needed to ensure your models train effectively and produce reliable, robust predictions.
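The sketch below shows how the metrics named above can be computed from first principles. It is written in plain Python and assumes binary labels encoded as 0/1 (1 = positive class) and a 0.5 decision threshold for turning scores into predictions. The function names are hypothetical and are not taken from the course or from any library. In practice, `sklearn.metrics` provides equivalents (`accuracy_score`, `precision_score`, `recall_score`, `f1_score`, `confusion_matrix`, `roc_auc_score`).

```python
from typing import Dict, Sequence


def confusion_counts(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, int]:
    """Count TP/FP/TN/FN, assuming labels are 0/1 with 1 as the positive class."""
    tp = fp = tn = fn = 0
    for t, p in zip(y_true, y_pred):
        if t == 1 and p == 1:
            tp += 1
        elif t == 0 and p == 1:
            fp += 1
        elif t == 0 and p == 0:
            tn += 1
        else:  # t == 1 and p == 0
            fn += 1
    return {"tp": tp, "fp": fp, "tn": tn, "fn": fn}


def classification_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Accuracy, precision, recall, and F1 from the confusion counts (0.0 on empty denominators)."""
    c = confusion_counts(y_true, y_pred)
    total = sum(c.values())
    accuracy = (c["tp"] + c["tn"]) / total if total else 0.0
    precision = c["tp"] / (c["tp"] + c["fp"]) if (c["tp"] + c["fp"]) else 0.0
    recall = c["tp"] / (c["tp"] + c["fn"]) if (c["tp"] + c["fn"]) else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


def roc_auc(y_true: Sequence[int], scores: Sequence[float]) -> float:
    """ROC AUC as the probability that a random positive outscores a random negative.

    Ties count as half a win. This O(P*N) pairwise form is for illustration only.
    """
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("ROC AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


if __name__ == "__main__":
    y_true = [1, 0, 1, 1, 0, 0]
    scores = [0.9, 0.4, 0.35, 0.8, 0.1, 0.6]
    y_pred = [1 if s >= 0.5 else 0 for s in scores]  # assumed 0.5 threshold
    print(confusion_counts(y_true, y_pred))  # {'tp': 2, 'fp': 1, 'tn': 2, 'fn': 1}
    metrics = classification_metrics(y_true, y_pred)
    print({k: round(v, 3) for k, v in metrics.items()})
    # {'accuracy': 0.667, 'precision': 0.667, 'recall': 0.667, 'f1': 0.667}
    print(round(roc_auc(y_true, scores), 3))  # 0.778
```

The threshold-based metrics change as the 0.5 cutoff moves, whereas ROC AUC works directly on the raw scores and summarizes ranking quality across all thresholds. That difference is why threshold metrics and ROC AUC are usually reported together.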