- Course

How Neural Networks Learn: Exploring Architecture, Gradient Descent, and Backpropagation

Neural networks drive many artificial intelligence applications today. This course will teach you what’s behind the magic—the dynamics of training neural networks, including backpropagation, gradient descent, and how to optimize network performance.

This course is included in the libraries shown below:

- AI

- Data

What you'll learn

So, you understand neural networks conceptually: what they are and, in general terms, how they work. But you might still be wondering about the details that make them work in practice.

In this course, How Neural Networks Learn: Exploring Architecture, Gradient Descent, and Backpropagation, you’ll gain an understanding of the details required to build and train a neural network.

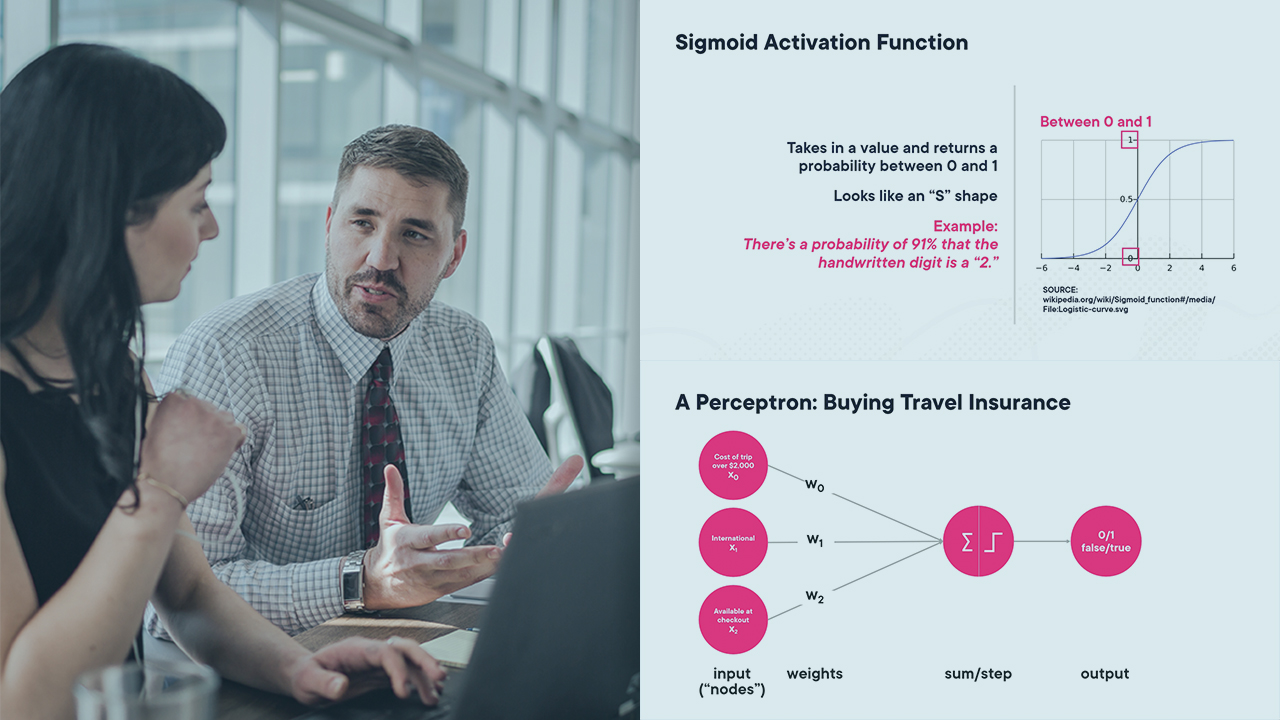

First, you’ll explore network architecture—made up of layers, nodes and activation functions—and compare architecture types.
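As a rough illustration of how layers, nodes, and activation functions fit together, here is a minimal sketch of a forward pass in plain Python. The network shape, the weight values, and the helper names (`dense`, `forward`) are assumptions made up for this example and are not taken from the course material.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def relu(z):
    """Pass positive values through unchanged and clamp negatives to zero."""
    return max(0.0, z)

def dense(inputs, weights, biases, activation):
    """One fully connected layer: each node computes activation(w . x + b)."""
    return [
        activation(sum(w * x for w, x in zip(node_weights, inputs)) + b)
        for node_weights, b in zip(weights, biases)
    ]

# Hypothetical weights: 2 inputs -> hidden layer of 2 ReLU nodes -> 1 sigmoid output node.
hidden_w = [[1.0, -1.0], [0.5, 0.5]]
hidden_b = [0.0, 0.0]
output_w = [[1.0, -1.0]]
output_b = [0.0]

def forward(x):
    """Run the input through the hidden layer, then the output layer."""
    hidden = dense(x, hidden_w, hidden_b, relu)
    return dense(hidden, output_w, output_b, sigmoid)[0]

print(forward([1.0, 1.0]))
```

Swapping `relu` for another activation function, or adding more rows to `hidden_w`, changes the architecture without changing the `dense` helper.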

Next, you’ll discover how neural networks adjust their weights and learn using backpropagation, gradient descent, loss functions, and learning rates.

Finally, you’ll learn how to implement backpropagation and gradient descent using Python.
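To give a flavor of what such an implementation might look like, the sketch below trains a tiny network with one hidden layer using backpropagation and stochastic gradient descent. It is not the course's own code: the `train` function, the AND-gate dataset, the squared-error loss, and the hyperparameter values are all assumptions chosen to keep the example short.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train(data, hidden_size=2, learning_rate=0.5, epochs=2000, seed=0):
    """Train a 1-hidden-layer sigmoid network; return the mean loss per epoch."""
    rng = random.Random(seed)
    n_in = len(data[0][0])
    w1 = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(hidden_size)]
    b1 = [0.0] * hidden_size
    w2 = [rng.uniform(-1, 1) for _ in range(hidden_size)]
    b2 = 0.0
    losses = []
    for _ in range(epochs):
        total = 0.0
        for x, y in data:
            # Forward pass: compute hidden activations, then the output.
            h = [sigmoid(sum(w * xi for w, xi in zip(w1[j], x)) + b1[j])
                 for j in range(hidden_size)]
            out = sigmoid(sum(w2[j] * h[j] for j in range(hidden_size)) + b2)
            total += 0.5 * (out - y) ** 2  # squared-error loss for this sample

            # Backward pass: apply the chain rule from the output back to the hidden layer.
            d_out = (out - y) * out * (1 - out)
            d_h = [d_out * w2[j] * h[j] * (1 - h[j]) for j in range(hidden_size)]

            # Gradient descent: step each parameter against its gradient.
            for j in range(hidden_size):
                w2[j] -= learning_rate * d_out * h[j]
                b1[j] -= learning_rate * d_h[j]
                for i in range(n_in):
                    w1[j][i] -= learning_rate * d_h[j] * x[i]
            b2 -= learning_rate * d_out
        losses.append(total / len(data))
    return losses

# Hypothetical toy dataset: the logical AND of two binary inputs.
and_data = [([0.0, 0.0], 0.0), ([0.0, 1.0], 0.0), ([1.0, 0.0], 0.0), ([1.0, 1.0], 1.0)]
losses = train(and_data)
print(f"first epoch loss: {losses[0]:.4f}, last epoch loss: {losses[-1]:.4f}")
```

The learning rate controls the size of each update: a smaller value tends to make the loss fall more slowly, while a much larger one can make training unstable.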

When you’re finished with this course, you’ll understand neural network architectures and the learning process well enough to build and train a neural network.