- Course

Applying the Lambda Architecture with Spark, Kafka, and Cassandra

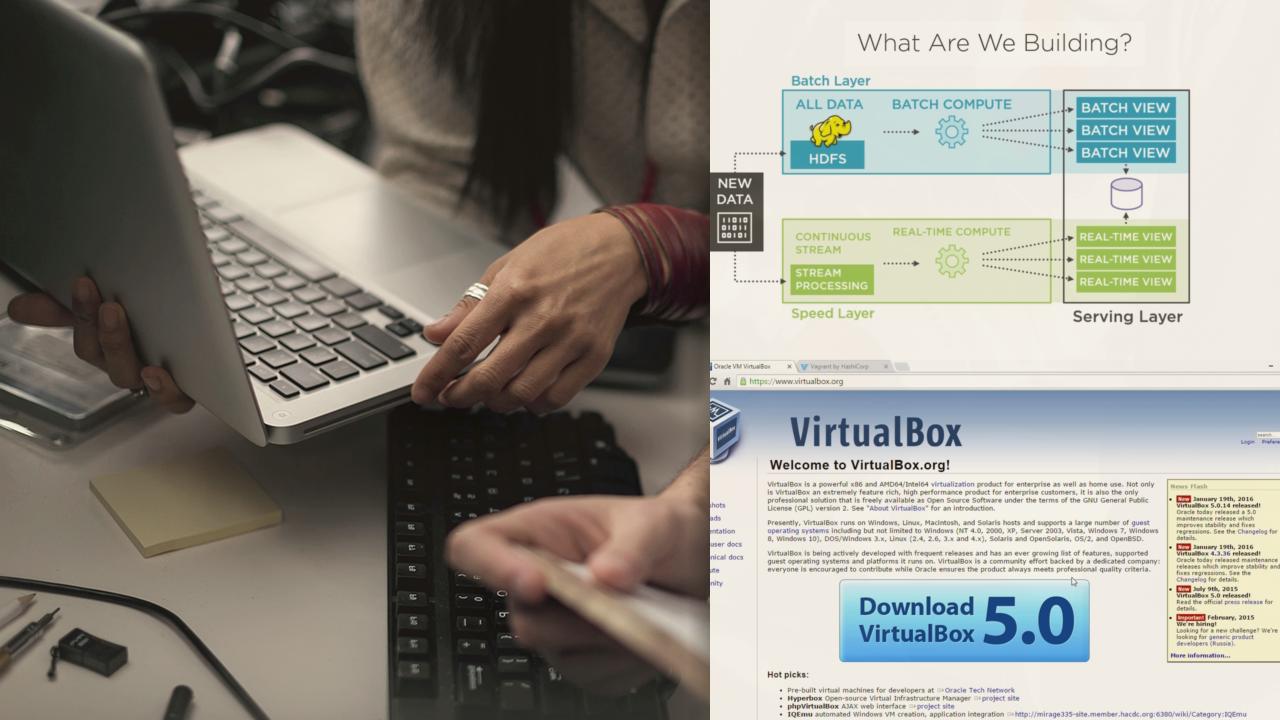

This course introduces how to build robust, scalable, real-time big data systems using a variety of Apache Spark's APIs, including the Streaming, DataFrame, SQL, and DataSources APIs, integrated with Apache Kafka, HDFS and Apache Cassandra.

- Course

Applying the Lambda Architecture with Spark, Kafka, and Cassandra

This course introduces how to build robust, scalable, real-time big data systems using a variety of Apache Spark's APIs, including the Streaming, DataFrame, SQL, and DataSources APIs, integrated with Apache Kafka, HDFS and Apache Cassandra.

Get started today

Access this course and other top-rated tech content with one of our business plans.

Try this course for free

Access this course and other top-rated tech content with one of our individual plans.

This course is included in the libraries shown below:

- Data

What you'll learn

This course aims to get beyond all the hype in the big data world and focus on what really works for building robust, highly-scalable batch and real-time systems. In this course, Applying the Lambda Architecture with Spark, Kafka, and Cassandra, you'll string together different technologies that fit well and have been designed by some of the companies with the most demanding data requirements (such as Facebook, Twitter, and LinkedIn) to companies that are leading the way in the design of data processing frameworks, like Apache Spark, which plays an integral role throughout this course. You'll look at each individual component and work out details about their architecture that make them good fits for building a system based on the Lambda Architecture. You'll continue to build out a full application from scratch, starting with a small application that simulates the production of data in a stream, all the way to addressing global state, non-associative calculations, application upgrades and restarts, and finally presenting real-time and batch views in Cassandra. When you're finished with this course, you'll be ready to hit the ground running with these technologies to build better data systems than ever.

Applying the Lambda Architecture with Spark, Kafka, and Cassandra

-

Defining the Lambda Architecture | 5m 16s

-

What Are We Building? | 2m 15s

-

Setting up Your Environment: Demo | 4m 41s

-

Tools We'll Need: Demo | 4m 16s

-

Installing the Course VM: Demo | 5m 27s

-

Fast Track to Scala: Basics | 6m 29s

-

Fast Track to Scala: Language Features | 11m 41s

-

Fast Track to Scala: Collections | 4m 6s

-

Spark with Zeppelin: Demo | 5m 30s

-

Summary | 1m 54s