- Course

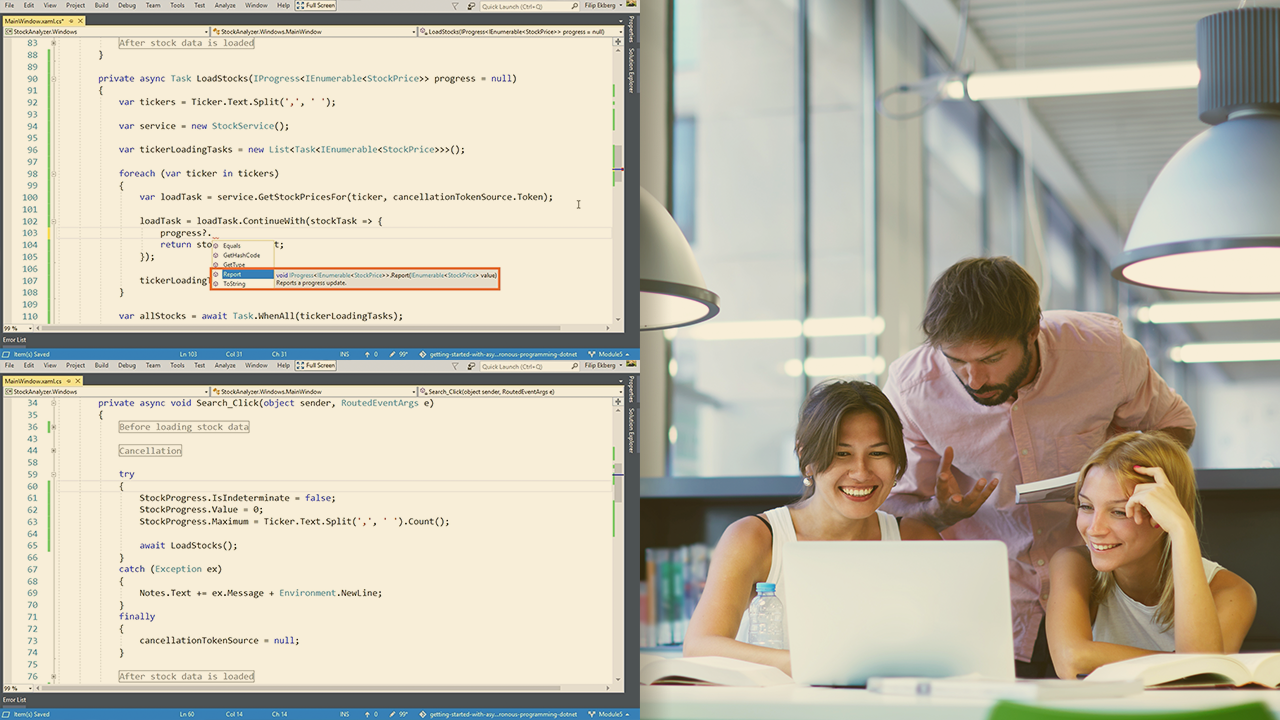

Getting Started with Asynchronous Programming in .NET

Learn how to apply asynchronous principles effectively in any type of .NET application, using async and await together with the Task Parallel Library. This course will give you the insight to build fast, powerful, and easy-to-maintain applications.

This course is included in the libraries shown below:

- Core Tech

What you'll learn

Applying asynchronous principles is crucial for building fast and responsive applications. In this course, Getting Started with Asynchronous Programming in .NET, you’ll gain the foundational knowledge to apply asynchronous principles efficiently and build fast, solid applications. First, you’ll explore how the async and await keywords fit into your .NET applications, and how they tie in with the Task Parallel Library. Next, you’ll discover how asynchronous programming differs from parallel programming, and how to use the parallel extensions to perform fast computations that use all of your available processing power. Finally, you’ll learn how to adapt in advanced scenarios, and where deeper knowledge of the internals may be required. When you’re finished with this course, you’ll have the skills and knowledge to apply asynchronous programming principles in any type of .NET application.