- Course

Data Literacy: Essentials of Azure Data Factory

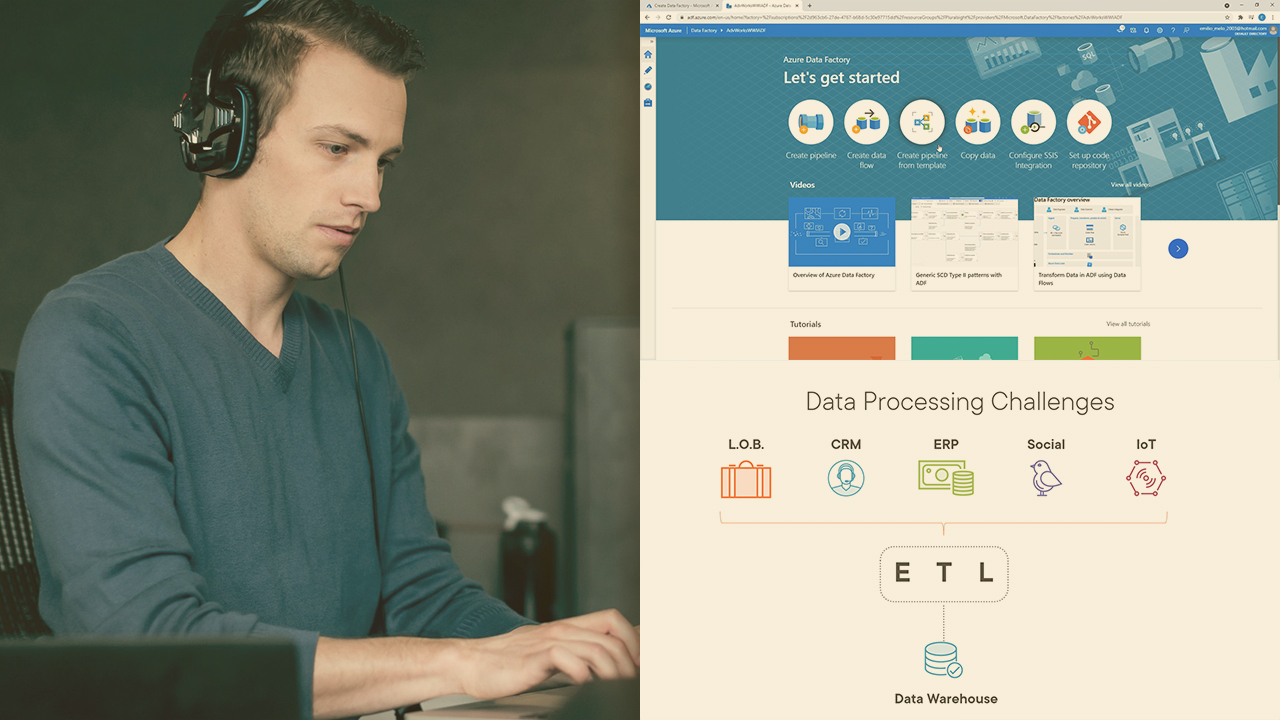

Data Platform is one of the hottest topics in IT right now, as data has become a strategic component in today’s business environment. This course will teach you the fundamental Azure Data Factory terms and concepts needed for the DP-900 exam.

This course is included in the libraries shown below:

- Cloud

- Data

What you'll learn

Data engineering is one of the hottest topics in the IT industry at the moment, as the velocity, volume, and variety of today’s data require skills beyond traditional ETL. The DP-900 exam, one of Microsoft’s data platform certifications, is a good entry point to the field. In this course, Data Literacy: Essentials of Azure Data Factory, you’ll learn the main Data Factory concepts needed for the exam. First, you’ll explore important concepts such as pipelines and activities. Next, you’ll discover other key components of Azure Data Factory, such as integration runtimes and triggers. Finally, you’ll learn how Data Factory can be used for data ingestion and processing. When you’re finished with this course, you’ll have the Azure Data Factory skills and knowledge needed to proceed with the DP-900 exam.