- Course

Delta Lake with Azure Databricks: Deep Dive

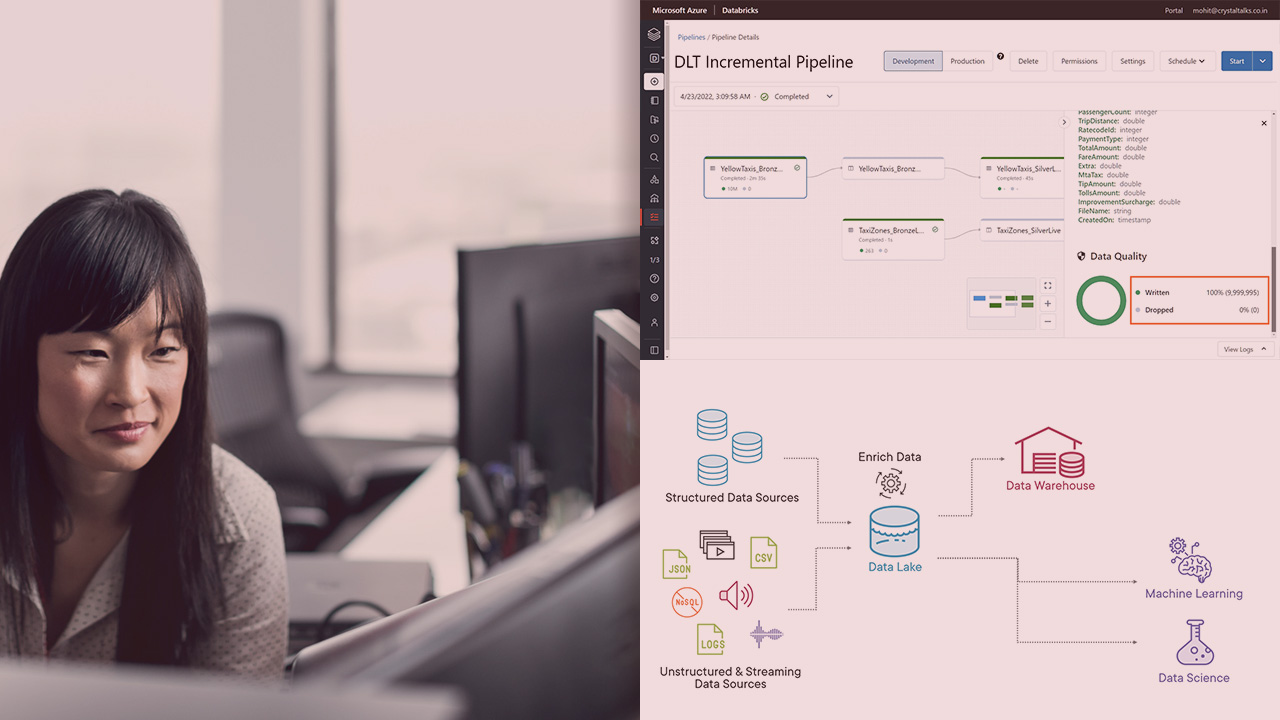

In this course, you'll learn about Delta Lake on Azure Databricks and its ecosystem (Delta Lake Storage, Delta Engine, Delta Architecture, Delta Live Tables, etc.), and how it provides warehouse-like features that you can use to build a Lakehouse.

This course is included in the libraries shown below:

- Cloud

- Data

What you'll learn

Delta Lake is an open-source storage layer that brings reliability to Data Lakes by providing data warehouse-like features on top of them.

It has a large ecosystem, with various tools and architectures built around it: Delta Lake Storage, Delta Engine, Delta Architecture, Delta Live Tables, Delta Sharing, etc. It can also handle batch and streaming data seamlessly. Together, these components and features can help you build an optimized, well-integrated Lakehouse architecture.

In this course, Delta Lake with Azure Databricks: Deep Dive, you’ll learn how Delta Lake and the various components in its ecosystem allow you to build a Lakehouse architecture, using Azure Databricks throughout.

First, you’ll learn what Delta Lake is and how it works, and you’ll see the different components in its ecosystem. Then, you’ll discover how to work with Delta Lake storage and its various features. Next, you’ll see how to handle streaming data on Delta Lake. After that, you’ll explore Delta Engine in Databricks to optimize storage and queries. Following this, you’ll see how to build a Lakehouse architecture, as well as how to build reliable ETL pipelines with Delta Live Tables. Finally, you’ll end with some common use cases and how to implement them. By the end of this course, you’ll have the knowledge and skills to work with Delta Lake and use its ecosystem components to build an optimized, well-integrated Lakehouse solution.